There was a time when product analytics was enough.

Product managers would log in to dashboards, squint at conversion funnels, and take note of yesterday’s trends. A spike in drop-off here. An uptick in retention there.

Insights, yes — but always late, always lagging.

This wasn’t a failure of the teams using the tools. It was a limitation of what the tools were built for. Traditional product analytics is designed for reflection, not reaction. And in today’s product landscape, that delay is costly.

Because the companies winning today aren’t just analyzing behavior. They’re responding to it — as it happens.

The shift: From insight to action

Modern digital products are no longer static. They’re expected to adapt and evolve in real time — responding to users based on what they’re doing right now, not what they did last week.

A customer is browsing a pricing page for the third time in a day? Trigger a chat from sales.

A player is stuck on a level? Offer a hint.

A shopper pauses at checkout? Flash a dynamic discount.
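Each of these moments is a rule evaluated over a recent window of behavior. The sketch below illustrates the first example. It assumes events arrive in timestamp order as (user_id, page, timestamp) tuples. The class name, the three-view threshold, and the 24-hour window are illustrative choices, not part of any Snowplow API.

```python
from collections import defaultdict, deque


class WindowedPageCounter:
    """Counts each user's views of one page within a sliding time window.

    Hypothetical illustration only; not a Snowplow Signals API.
    """

    def __init__(self, page: str, window_seconds: float, threshold: int) -> None:
        self.page = page
        self.window_seconds = window_seconds
        self.threshold = threshold
        # Per-user queue of view timestamps still inside the window.
        self._views: dict[str, deque[float]] = defaultdict(deque)

    def observe(self, user_id: str, page: str, ts: float) -> bool:
        """Record an event; return True when the windowed count hits the threshold."""
        if page != self.page:
            return False
        views = self._views[user_id]
        views.append(ts)
        # Evict views that have fallen out of the window ending at this event.
        while views and ts - views[0] > self.window_seconds:
            views.popleft()
        # Equality (not >=) means the trigger fires once per crossing,
        # not on every subsequent view.
        return len(views) == self.threshold


HOUR = 3600
counter = WindowedPageCounter("/pricing", window_seconds=24 * HOUR, threshold=3)
events = [
    ("u1", "/pricing", 0),
    ("u1", "/docs", 600),
    ("u1", "/pricing", 2 * HOUR),
    ("u1", "/pricing", 5 * HOUR),
]
for user, page, ts in events:
    if counter.observe(user, page, ts):
        print(f"Trigger sales chat for {user} at t={ts}s")  # fires on the third pricing view
```

A production system would also need to handle late or out-of-order events. This sketch deliberately ignores them.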

These aren’t marketing moments. They’re product experiences — and they require intelligence embedded within the product itself.

That’s where the shift from traditional product analytics to real-time product operationalization begins.

And it’s not just about flashy personalization. It’s about performance. Retention. Growth. When your product can act on behavioral data, not just track it, you create meaningful, measurable outcomes.

Why analytics tools fall short

Let’s be clear: product analytics tools like Amplitude, Mixpanel, and Heap have been game-changing. They’ve democratized insights. They’ve helped teams A/B test, refine onboarding flows, and diagnose UX friction.

But they all share a fundamental limitation: they are built to answer “What happened?”, not “What should we do right now?”

These platforms live outside your product. They process data in batches. They’re great at surfacing patterns — but they don’t power the product itself.

If you want to act on the data in the moment (build adaptive interfaces, serve real-time recommendations, or deploy customer-facing AI agents), you need something else.

Something faster. Something closer to the product experience.

You need infrastructure that turns behavioral data into live, contextual intelligence.

Snowplow Signals: Real-time intelligence for product teams

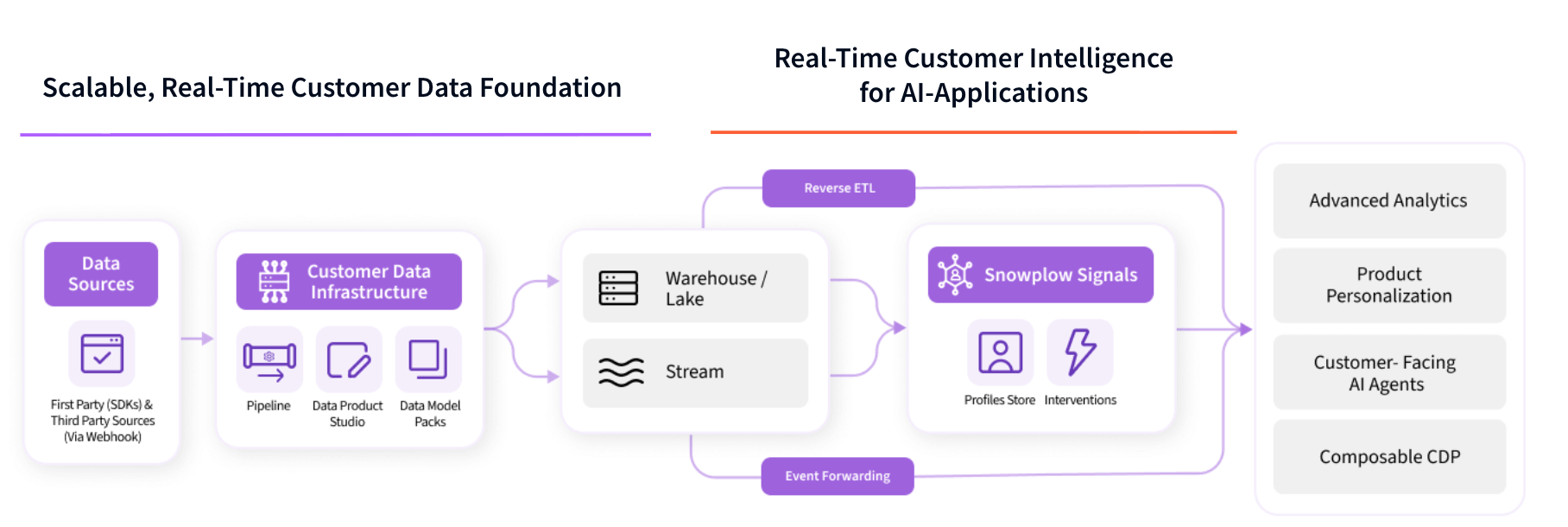

Enter Snowplow Signals — a real-time customer intelligence system built specifically for product and engineering teams.

Signals is not another analytics tool. It’s an operational layer that sits on top of your Snowplow data pipeline and transforms behavioral data into live signals your applications can use.

It does this through three key components:

1. Profiles Store

A low-latency API that provides your front-end, backend, or AI agent with real-time and historical user attributes. It merges in-session activity with warehouse-derived traits — everything from recent page views to machine learning scores.

So your app knows that the user browsing your pricing page is on a trial, belongs to a high-fit account, and is in their second session today, and it can tailor the experience accordingly.
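At its core, the Profiles Store answers one question: given a user, what do we know right now? The sketch below shows only the merge idea. It assumes warehouse traits arrive as a batch-computed dictionary and session attributes are updated per event. `build_profile`, `pricing_page_variant`, the attribute names, and the precedence rule (fresh session values win on conflict) are all hypothetical and are not the Signals API.

```python
from typing import Any


def build_profile(warehouse_traits: dict[str, Any],
                  session_attributes: dict[str, Any]) -> dict[str, Any]:
    """Merge batch-computed traits with live in-session attributes.

    On a key conflict the in-session value wins because it is fresher.
    """
    return {**warehouse_traits, **session_attributes}


def pricing_page_variant(profile: dict[str, Any]) -> str:
    """Pick a pricing-page experience from the merged profile (illustrative rules)."""
    if (profile.get("plan") == "trial"
            and profile.get("account_fit") == "high"
            and profile.get("sessions_today", 0) >= 2):
        return "offer_sales_call"
    if profile.get("plan") == "trial":
        return "highlight_upgrade"
    return "default"


# Traits a nightly warehouse job might produce, including an ML score.
warehouse = {"plan": "trial", "account_fit": "high", "churn_score": 0.12}
# Attributes maintained from the live event stream.
session = {"sessions_today": 2, "pricing_views_today": 3}

profile = build_profile(warehouse, session)
print(pricing_page_variant(profile))  # offer_sales_call
```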

2. Interventions Engine

Instead of waiting for humans to interpret data, the Interventions Engine triggers product actions in response to behavior. It detects meaningful signals (like hesitation, rage clicks, or drop-off risk) and pushes timely nudges, in-app prompts, or custom UI logic to address them.

It’s automation without the guesswork. Logic you define; signals you trust.
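Rage clicks show why this kind of trigger has to run in real time. This sketch uses a common heuristic that we assume here rather than take from Signals: N or more clicks on the same element within a short window. The threshold, the window, and the `Intervention` type are hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class Click:
    element_id: str
    ts: float  # seconds


@dataclass
class Intervention:
    kind: str
    target: str


class RageClickDetector:
    """Flags bursts of clicks on one element inside a short time window."""

    def __init__(self, min_clicks: int = 3, window_seconds: float = 1.0) -> None:
        self.min_clicks = min_clicks
        self.window_seconds = window_seconds
        self._recent: deque[Click] = deque()

    def on_click(self, click: Click) -> Optional[Intervention]:
        # A click on a different element starts a new burst.
        if self._recent and self._recent[-1].element_id != click.element_id:
            self._recent.clear()
        self._recent.append(click)
        # Keep only clicks inside the window ending at this click.
        while click.ts - self._recent[0].ts > self.window_seconds:
            self._recent.popleft()
        if len(self._recent) >= self.min_clicks:
            self._recent.clear()  # avoid re-firing on the same burst
            return Intervention(kind="show_help_tooltip", target=click.element_id)
        return None


detector = RageClickDetector()
for ts in (0.0, 0.3, 0.5):
    action = detector.on_click(Click("apply-coupon", ts))
print(action)  # Intervention(kind='show_help_tooltip', target='apply-coupon')
```

The detection logic and the response are deliberately separate. The detector only returns an `Intervention`, and the application decides how to render it.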

3. Developer-First Tooling

Everything in Signals is designed to fit into an engineering workflow. Git-backed configurations. SDKs in TypeScript and Python. CI/CD-friendly.
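Git-backed configuration turns attribute logic into reviewable code. The following is not the Signals SDK. It is a hypothetical, framework-free sketch of the idea: attributes are declared as plain data, and a validation function runs in CI on every pull request. `AttributeDefinition`, `validate`, and the allowed aggregations are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

ALLOWED_AGGREGATIONS = {"count", "sum", "min", "max", "last"}


@dataclass(frozen=True)
class AttributeDefinition:
    """A declarative, version-controllable description of one user attribute."""
    name: str
    event_name: str
    aggregation: str
    window_hours: int
    property_name: Optional[str] = None  # needed for everything except "count"


def validate(definitions: list[AttributeDefinition]) -> list[str]:
    """Return human-readable errors; an empty list means the config is valid."""
    errors: list[str] = []
    names: set[str] = set()
    for d in definitions:
        if d.name in names:
            errors.append(f"{d.name}: duplicate attribute name")
        names.add(d.name)
        if d.aggregation not in ALLOWED_AGGREGATIONS:
            errors.append(f"{d.name}: unknown aggregation '{d.aggregation}'")
        if d.window_hours <= 0:
            errors.append(f"{d.name}: window_hours must be positive")
        if d.aggregation != "count" and d.property_name is None:
            errors.append(f"{d.name}: '{d.aggregation}' needs a property_name")
    return errors


ATTRIBUTES = [
    AttributeDefinition("page_views_24h", "page_view", "count", 24),
    AttributeDefinition("cart_value_max_7d", "add_to_cart", "max", 168, "value"),
]

print(validate(ATTRIBUTES))  # []
```

In CI, a non-empty result fails the build before the definitions reach production.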

You don’t need to adopt a new dashboard paradigm — you stay in your stack, with full control over how your product responds to user behavior.

This is personalization infrastructure for engineers, built not to abstract data but to activate it.

Why AWS is a natural fit — but not the only one

For teams already operating in the AWS ecosystem, this all becomes even more powerful.

With services like Amazon Kinesis, S3, Redshift, SageMaker, and Bedrock, AWS offers a flexible, scalable environment for real-time event data, model training, and personalization.

And Signals is designed to slot directly into that environment.

But it’s important to say: AWS isn’t a requirement. Snowplow runs on GCP, Azure, or your own infrastructure. However, for the many teams already invested in AWS, using Snowplow Signals in that ecosystem means:

- Seamless streaming ingestion via Kinesis.

- Durable, scalable storage and backup via S3.

- Powerful model training via SageMaker, using warehouse data enriched by Snowplow.

- Dynamic recommendations, fed by real-time user context from Signals.

- Secure governance and compliance using VPCs, IAM, and KMS — keeping everything within your control.

Instead of treating behavioral data as just something to store and analyze later, this approach turns AWS into an engine for real-time experience delivery.

A glimpse into what’s possible

Let’s bring this to life.

HelloFresh: From fragmented signals to a unified data engine

For HelloFresh, data has never been a side project — it’s at the heart of the company’s mission to change the way people eat.

But even with a strong culture of measurement and experimentation, the team found itself limited by the constraints of traditional analytics tools.

Fragmented systems, black-box dashboards, and high-latency pipelines made it nearly impossible to respond to customer behavior in the moment.

So they rebuilt their data foundation.

HelloFresh shifted to a first-party data strategy powered by Snowplow on AWS and centralized insights in Snowflake. Events now land in the company’s platform in under five seconds, flowing from Amazon S3 through Snowflake’s bronze-silver-gold Medallion layers.
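A toy example makes the bronze-silver-gold pattern concrete. Bronze keeps raw events as they landed, silver validates and deduplicates them, and gold aggregates them into business-ready tables. HelloFresh’s real pipeline runs in Snowflake. This in-memory Python sketch, with made-up field names, only shows what each layer is responsible for.

```python
from collections import Counter
from typing import Any

Event = dict[str, Any]


def to_silver(bronze: list[Event]) -> list[Event]:
    """Validate and deduplicate raw events (silver layer)."""
    seen: set[str] = set()
    silver: list[Event] = []
    for event in bronze:
        # Drop malformed rows missing required fields.
        if not event.get("event_id") or not event.get("event_name"):
            continue
        # Drop duplicates, e.g. the same event delivered twice after a retry.
        if event["event_id"] in seen:
            continue
        seen.add(event["event_id"])
        silver.append(event)
    return silver


def to_gold(silver: list[Event]) -> dict[str, int]:
    """Aggregate clean events into a reporting table (gold layer)."""
    return dict(Counter(e["event_name"] for e in silver))


bronze = [
    {"event_id": "e1", "event_name": "add_to_cart"},
    {"event_id": "e1", "event_name": "add_to_cart"},  # duplicate delivery
    {"event_id": "e2", "event_name": "checkout"},
    {"event_id": None, "event_name": "page_view"},    # malformed
]
print(to_gold(to_silver(bronze)))  # {'add_to_cart': 1, 'checkout': 1}
```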

Suddenly, what used to take 36 hours now happens in near real time.

Beyond speed, the move brought accuracy (95% parity with backend systems) and transparency. The operations team fine-tunes logistics based on behavior, the product team tests new features faster, and marketing can personalize campaigns without guesswork.

With Snowplow’s AI-ready data, HelloFresh is now building toward real-time experiences that learn and adapt with every click.

“The first significant benefit was the near real-time processing. Over 99% of events were available in under five seconds.”

— David Castro Gavino, Global VP of Data, HelloFresh

Supercell: Powering live experiences at scale

For mobile gaming giant Supercell, the stakes are high. Each new product feature, in-app offer, or matchmaking tweak touches millions of players in real time. Static dashboards and delayed queries weren’t enough.

Supercell adopted Snowplow to move past traditional product analytics. The team’s goal wasn’t just to understand what players were doing — it was to operationalize that understanding inside the game itself.

Real-time behavioral data feeds into internal tools that adapt user journeys, flag potential churn, and optimize engagement dynamically.

With hundreds of event types streaming through their pipeline, Supercell’s product teams are equipped to build, test, and iterate faster than ever—without being constrained by vendor black boxes.

They’ve built their own feedback loops, and their games feel smarter, stickier, and more personal because of it.

What makes Signals different

You may be wondering: why not use a customer data platform (CDP)? Why not just integrate a recommendation engine?

It comes down to control, transparency, and real-time capability.

CDPs like Segment or Tealium offer audience segmentation and activation — but they aren’t built for product use cases.

They prioritize marketing channels, not in-product personalization. They operate on batch data, lack real-time computation, and often rely on opaque decision logic.

Signals, by contrast, gives you:

- Full transparency into how attributes are calculated.

- Real-time updates that respond to in-session behavior.

- Flexible modeling of user attributes — combine in-stream and warehouse data.

- Ownership of your data — run everything in your own cloud, on your terms.

- Developer-first design—no proprietary UI or abstraction layers that hide the data or lock you in.

For product and engineering teams, this isn’t just more flexible. It’s liberating.

The strategic implications

Let’s zoom out.

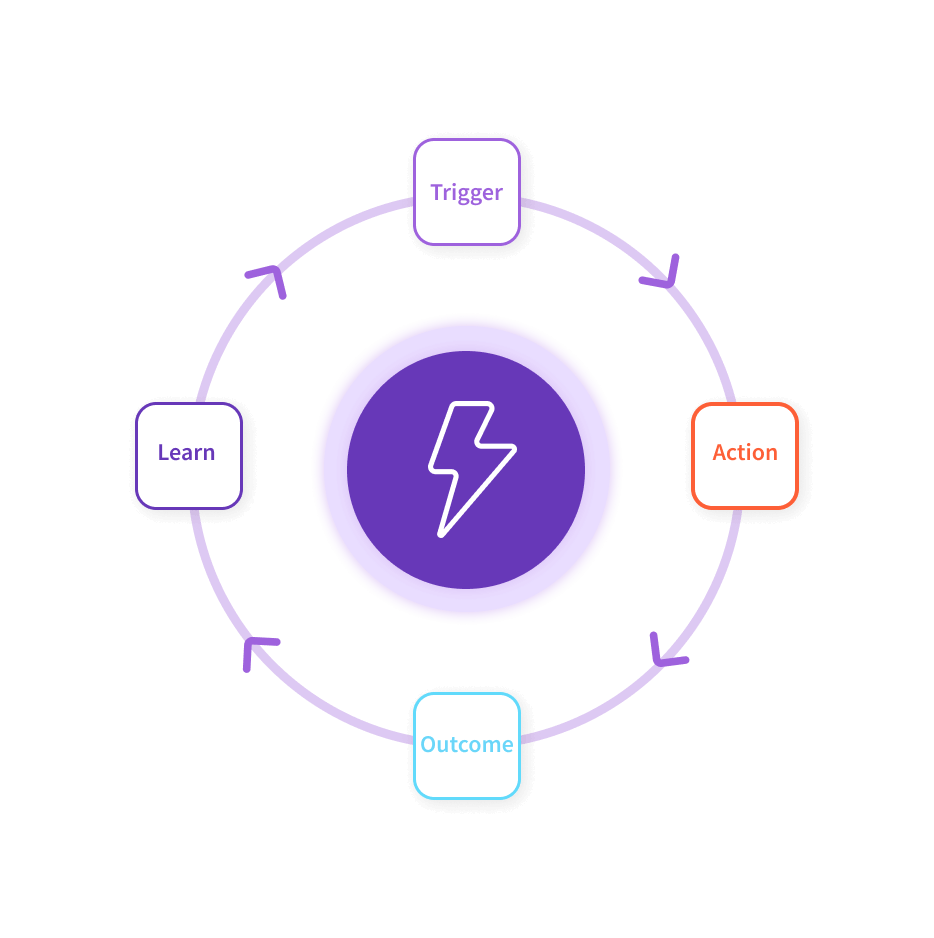

Signals doesn’t just make your product smarter. It makes your organization more agile.

When data flows cleanly from event capture to action — with a shared behavioral foundation between product, engineering, AI, and marketing teams — you remove bottlenecks. You stop waiting on delayed dashboards. You move from reacting quarterly to responding instantly.

And when your infrastructure supports learning at the edge, every product interaction becomes a moment to improve — not just something to measure later.

That’s not just a better product.

That’s a competitive advantage.

Where to start

If you already collect behavioral data with Snowplow, or are thinking about how to bring richer signals into your applications, Signals is a natural next step.

If you’re building in AWS or something similar, the path is even clearer. You already have the building blocks. Signals just connects them.

Start with a use case:

- Real-time recommendations for your app.

- In-product nudges or AI chat triggers.

- Dynamic onboarding experiences.

- Context-aware search.

Then think about where your behavioral data lives — and how fast it can move.

Snowplow Signals bridges the gap. It brings your data to life inside your product.

Final word: From reactive to responsive

The era of static products is ending. The companies thriving today are building products that adapt, act, and learn.

They’re not just watching behavior.

They’re shaping it.

They’re owning it.

They’re operationalizing it.

If you’re ready to stop waiting for dashboards and start building responsive, intelligent experiences, Snowplow Signals is worth considering as your foundation.

It’s not just the next step in analytics.

It’s the beginning of a smarter product future.
