Preface

Something sweeping is unfolding right now. A convergence of intent, thought, action, and memory behind a blinking cursor: the prompt.

This flashing marker, like the one Neo saw in ‘The Matrix’, sits atop a model of staggering capability. Its goal is not merely to explain the world, but to run it.

This is the moment AI stops being a tool and starts becoming the interface to everything.

The interface to everything

Among the avalanche of AI announcements on LinkedIn and X, it’s easy to become numb to the overwhelming noise all vying for your attention.

But something made me pause: OpenAI’s latest release of ChatGPT for Business.

It’s easy to miss just how profound this is; the features are hidden behind an enterprise licence, which means the masses are currently oblivious. But it’s a tectonic shift.

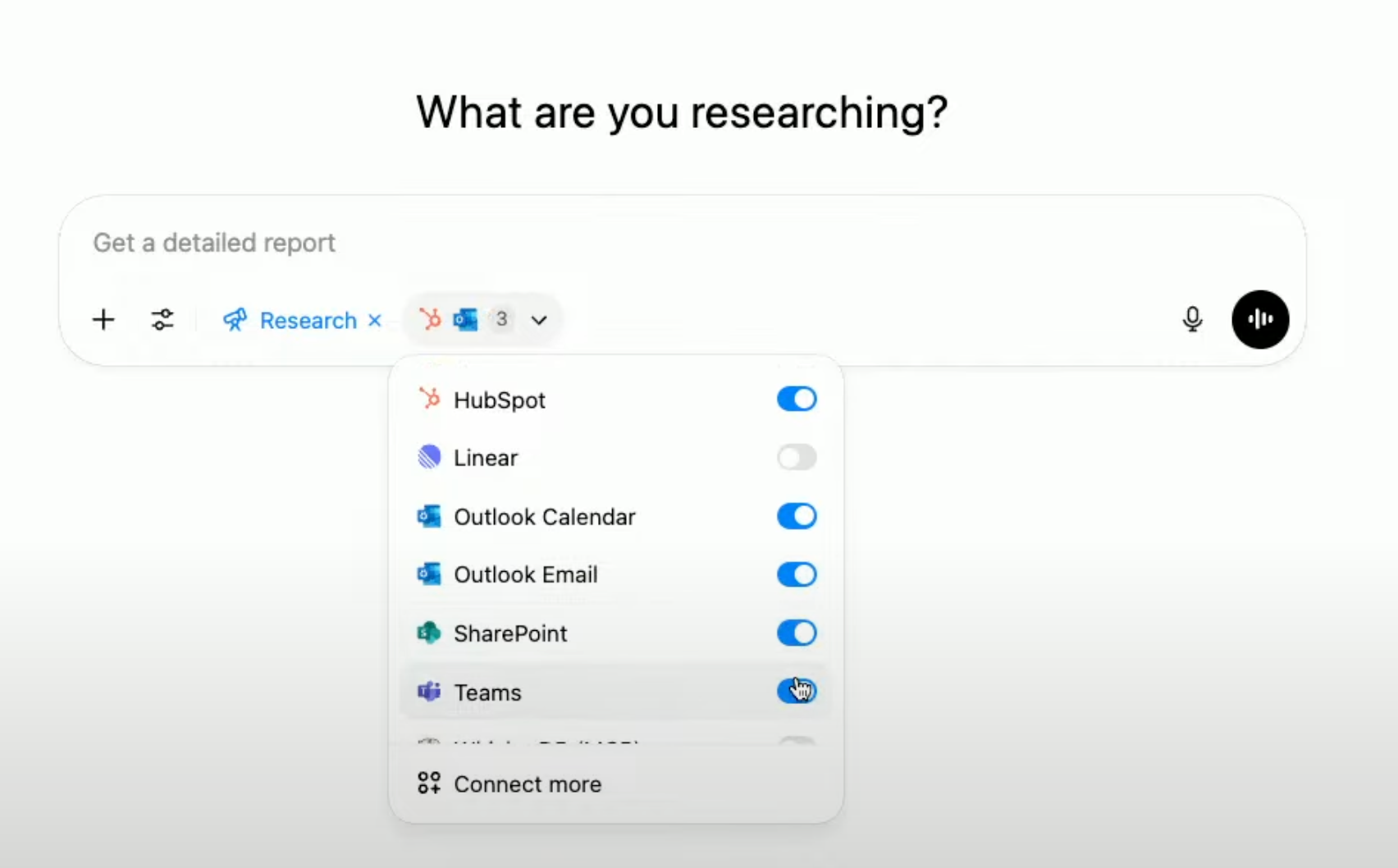

ChatGPT for Business now:

- Connects to your knowledge bases: Google Drive, SharePoint, Dropbox

- Connects to your core operational tools: HubSpot, Outlook, Teams, Linear

- Connects to ANY line of business app that has an MCP server

- Records and learns from conversations: sales calls, project reviews, customer meetings

Each of these on its own is significant. Together, they’re tectonic.

ChatGPT is no longer just an assistant. OpenAI is evidently preparing it to be the default interface for work: contextual, agentic, persistent, exactly how you’d want your tools to be.

The fragmentation we’ve tolerated in our tools (copying outputs from ChatGPT to Word, Jira, Notion) starts to collapse. And in that collapse, entire SaaS categories are at risk.

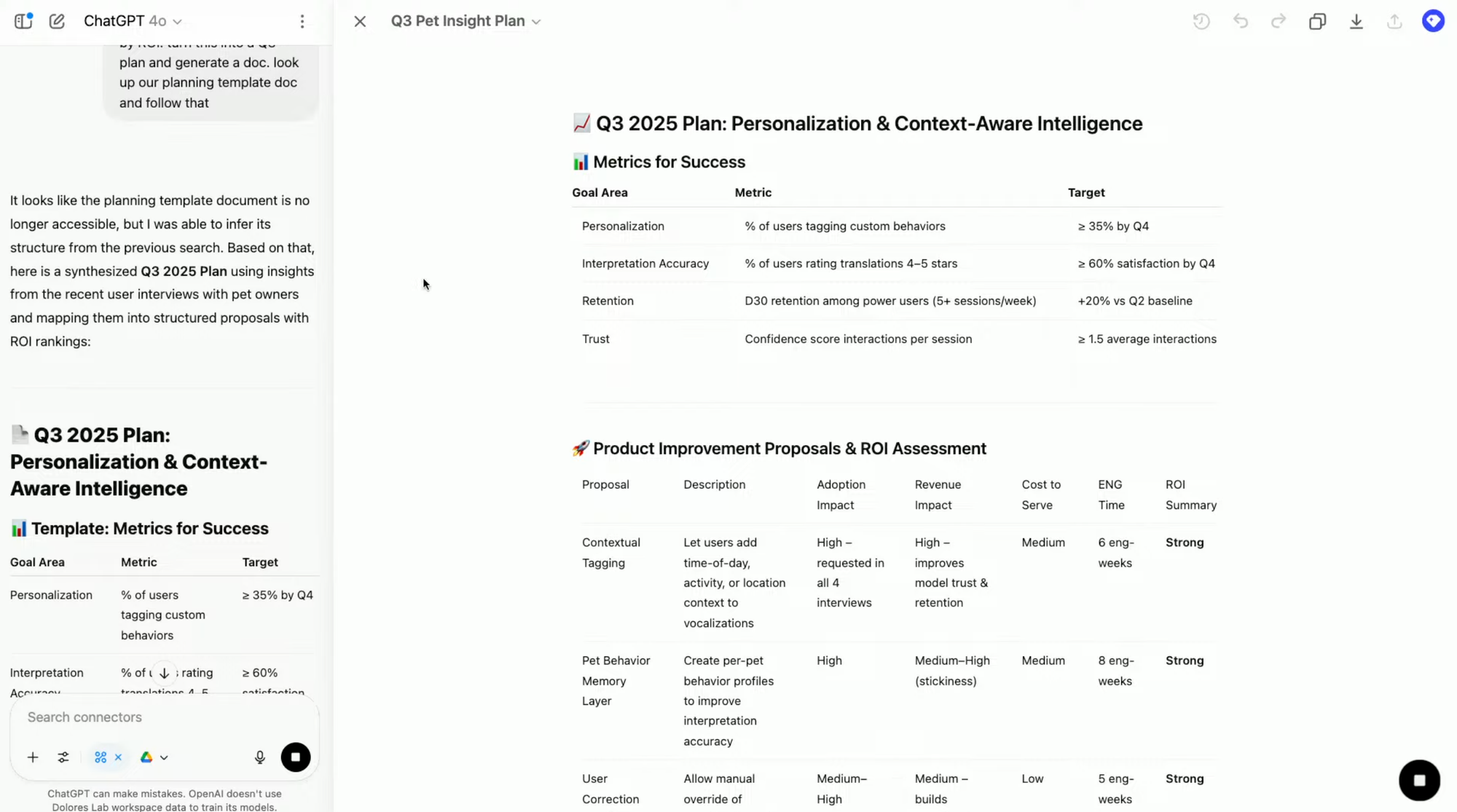

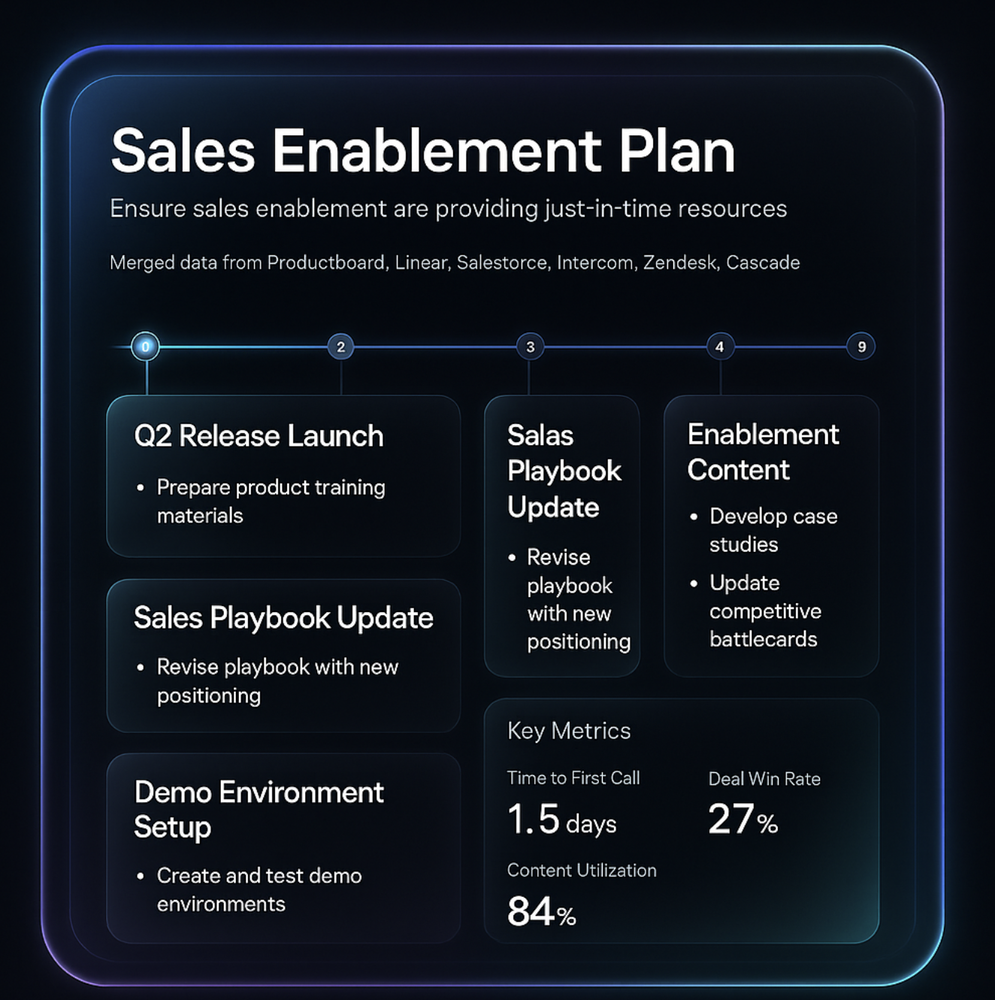

Just look at this Q3 2025 product plan, which was created using:

- A template and multiple documents from Google Drive, and

- Pipeline and sales data from HubSpot.

If it were me, I'd have also included Linear to illustrate that true cross-functional GTM alignment can be done directly from a prompt.

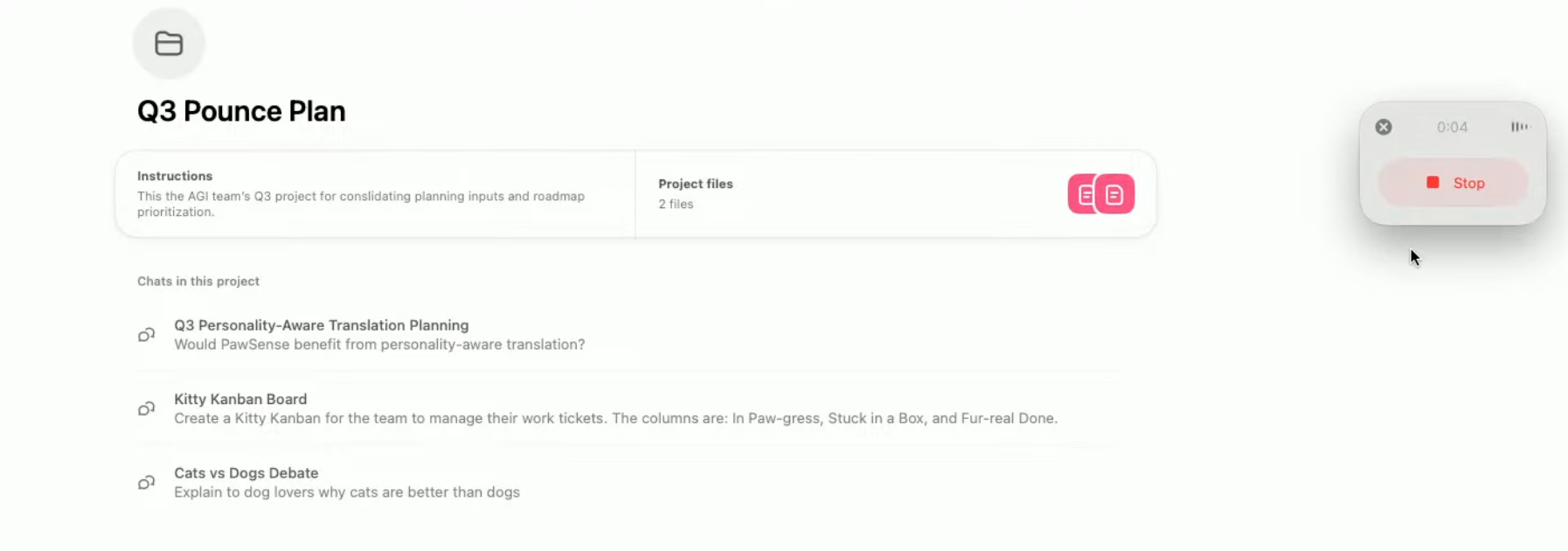

As if that were not enough, OpenAI then had a ‘one more thing’ moment, unveiling the new ‘Record’ function.

It lets you record any meeting or call without a bot joining it.

Not only that, you can query across all call transcripts and all connected system data for signals, natively surfacing insights that simply aren’t possible today across endless networks of siloed apps.

With these new capabilities, the fact that the average company runs more than 100 SaaS apps will become less of a problem.

The more profound question is: how many of those apps will even be relevant any more?

Sherlocked

In the language of the geekerati, this is known as being ‘sherlocked’. Apple did it with Sherlock 3, absorbing the features of Karelia’s Watson and rendering it obsolete. OpenAI is doing the same, quietly but far more broadly.

Any product that:

- Aggregates knowledge

- Assists with workflows

- Wraps context around content

…is now within range. All of the following meet at least one of these criteria:

- Sales enablement tools like Clari

- Meeting AI assistants like Fireflies, Fathom

- Internal search tools like Glean, Hebbia

- Productivity apps like Notion, Coda

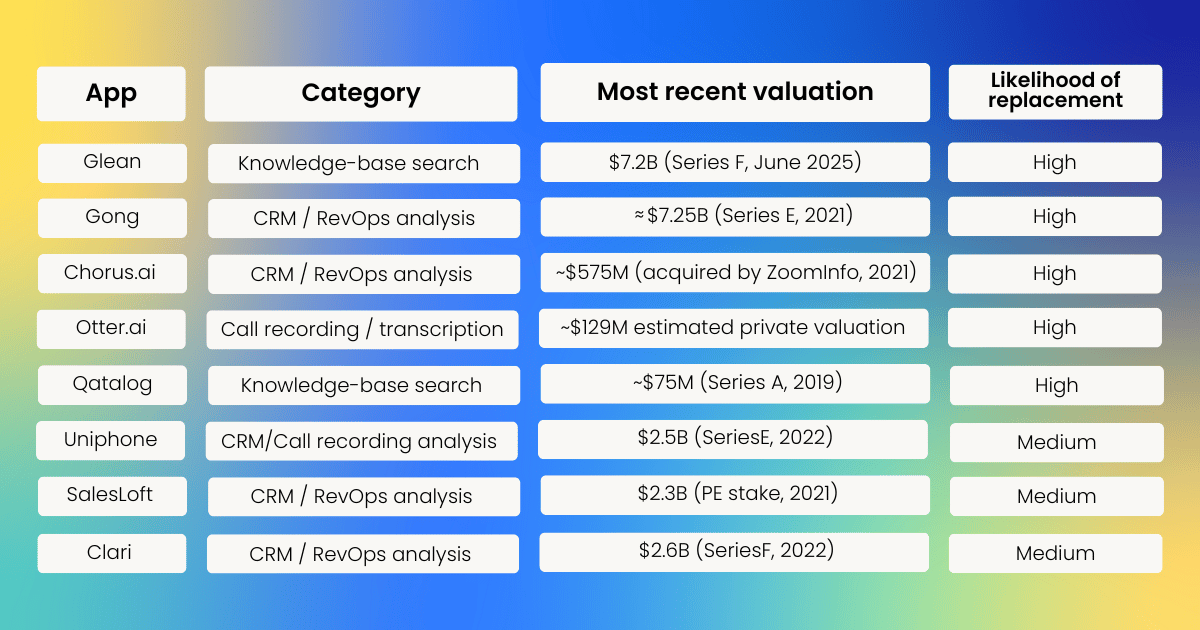

ChatGPT now does all of this natively, in plain English, with memory. Unicorns will not escape unharmed either.

This isn't a feature drop; it's a mic drop of epic proportions.

These capabilities form the foundation for a latent AI operating system: one that remembers what you’ve said in meetings, connects it to your roadmap and your CRM, and helps you write the investor update, all within the same flow.

OpenAI is quietly building the productivity backbone of the next decade.

So what’s next?

Short-term: Beyond the ad hoc

Right now, most ChatGPT usage is ad hoc:

- Prompt for a blog post

- Summarise a meeting

- Draft a cold email

- Debug a snippet of code

But these are all one-off interactions. If, like me, you use ChatGPT for recurring product workflows, like competitor research, persona generation, and market analysis, you’ll find yourself recreating prompts or exporting outputs elsewhere.

It’s friction that doesn’t need to exist.

The canvas of productivity

Canvas is a step forward – an inline document editor with prompt integration. But it remains limited. Serious workflows still require a handoff to Miro, Figma, Word, or Notion.

That’s suboptimal and, more importantly, it pushes people outside OpenAI’s sphere of influence.

Medium term: The wrapper reckoning

As mentioned, the ‘Record’ capability has, almost overnight, put the business model of 100+ meeting-minutes and recording tools in serious doubt.

Similarly, any product that meets a widespread need, is a wrapper around an AI model, and is making serious revenue is going to come under threat. It’s not a question of if, but when. The ROI that OpenAI and Anthropic need on their multi-year, billion-dollar investments in research all but demands it.

There are multiple app categories that I think will be at risk in the medium term, where:

- They effectively provide a specific function above an AI model that is widely used

- They’ve proven PMF and have high-growth ARR

Essentially, when conceiving ideas, you need to go for the most niche of niche products that can still deliver a good return and a healthy business. The more your product is an AI wrapper around something generic with very high revenue potential, the riskier its business model is likely to be.

Apps that are both generic and high-growth include:

- AI Video/Editing tools - Runway

- AI Image Gen - Midjourney

- AI Search and Knowledge - Glean, Hebbia, Dashworks

- AI Customer Support - Intercom, Drift, Ada

But the one that I think will be first is the ‘Vibe Coding’ category of product.

Vibe coding: AI app builders

While these apps are great for prototyping, I think they remain a year or two away from being true no-code platforms that let anyone with an idea make it happen.

There’s nowhere near enough intuitive connective glue to all of the back-end elements a proper app requires. Yet they’re somehow making an incredible amount of money:

- Bolt – £40m ARR

- Lovable – £75m ARR

- Replit – £100m+ ARR

With Codex, OpenAI already provides much of what apps like these, and Cursor, provide. Add the pre-configured MCP connectors, some enhancements to connect it to services like Supabase, and a front-end visual editor, and you’d have an even better capability than today’s vibe coding apps.

Codex becomes the foundation for OpenAI’s native app builder — not just for writing code, but for creating full applications, end-to-end, with agents running under the hood.

Using the new MCP-based connectors introduced in June 2025, Codex will go far beyond vibe coding tools like Lovable or Replit by stitching together real-world services like Supabase, Stripe, HubSpot, Linear, and Highspot dynamically, without any setup templates.

You’ll be able to say: “Build me an internal GTM platform with integrated product, marketing, and sales agents that stay in sync.”

And Codex will not only create the UI, backend, and workflows – it will embed agents that autonomously align product updates with sales playbooks, publish enablement content to Highspot, sync back to Linear, and update CRM workflows in HubSpot.

All without copy-pasting APIs or fiddling with logic flows.

Where vibe coding tools scaffold apps, Codex will deliver living systems: goal-driven, multi-agent, and fully integrated with your GTM stack.

Generic, horizontal tools with high revenue are most at risk.

Niche, deeply embedded products will survive.

If you’re building, skew toward the latter.

Long-term: The dawn of the AI operating system

While it’s been quiet publicly on the Jony Ive and OpenAI front, OpenAI’s acquisition of io, the device startup Jony co-founded, was clearly years in the making. Sam Altman and Jony Ive weren’t talking design systems; they were talking paradigm shifts.

Because real strategy, especially in an era as fluid as AI, takes time. And Jony, who helped define the modern era of computing through his work at Apple, wasn’t brought in to reskin interfaces. He was brought in to help invent new ones.

To understand what’s coming, it helps to look back.

Apple didn’t invent the graphical user interface, the mouse, or the touchscreen. Those innovations came from Xerox PARC and academic labs. But Apple did what few others could: it reimagined how humans interact with information. The mouse enabled the GUI. Multitouch made the smartphone intuitive.

Each leap wasn’t just a hardware upgrade; it was a shift in the model of personal computing. The command line gave way to icons. Icons gave way to touch. And now, touch is giving way to something even more elemental: language.

Today, we access generative capabilities through input boxes, keyboards, and apps designed for the internet age, not the agentic AI age. These interfaces were built for documents, clicks, and resizing.

But AI is fluid, contextual, agentic. It doesn’t belong inside rigid UIs. ChatGPT feels like magic until you’re forced to copy its output into Notion, format it in Word, or paste it into Jira. Context is lost. The thread breaks.

The interaction model is fundamentally misaligned with the intelligence it’s trying to support.

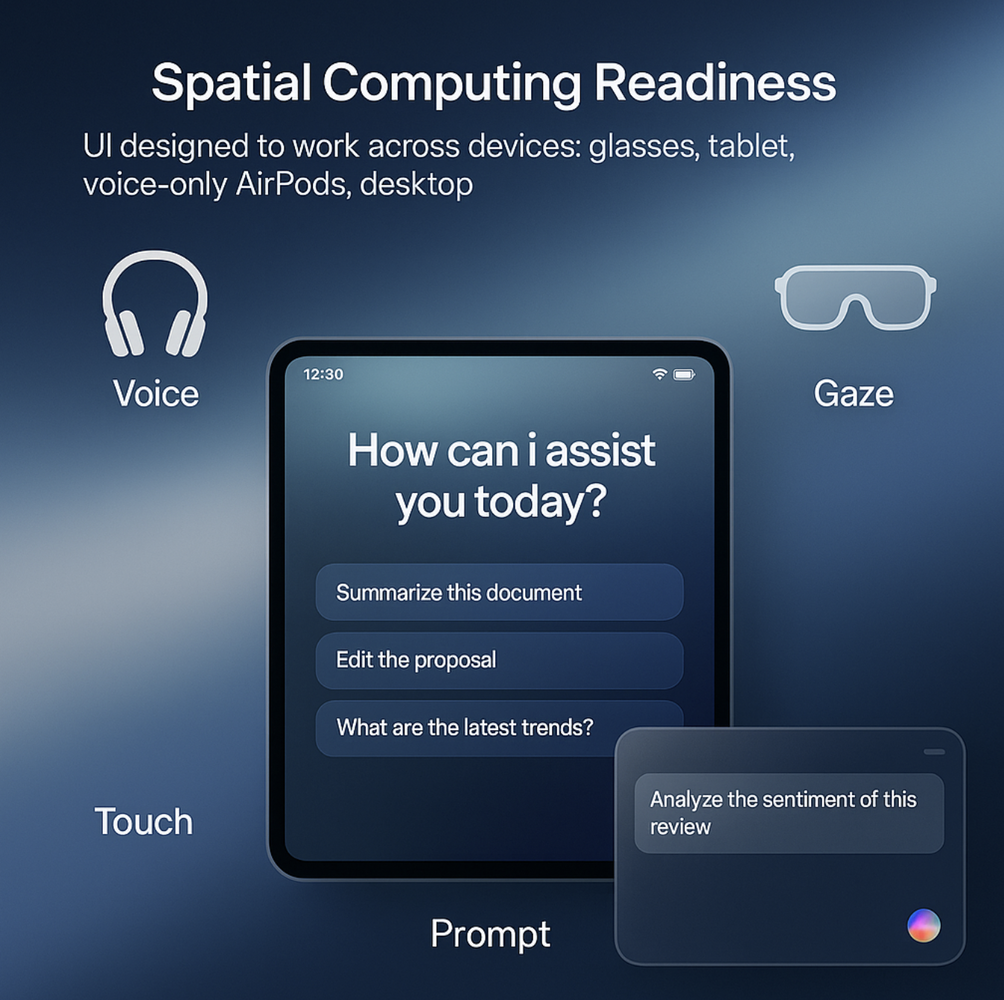

What comes next is not an app. It’s an entirely new class of computing: one where the interface adapts to the user, not the other way around.

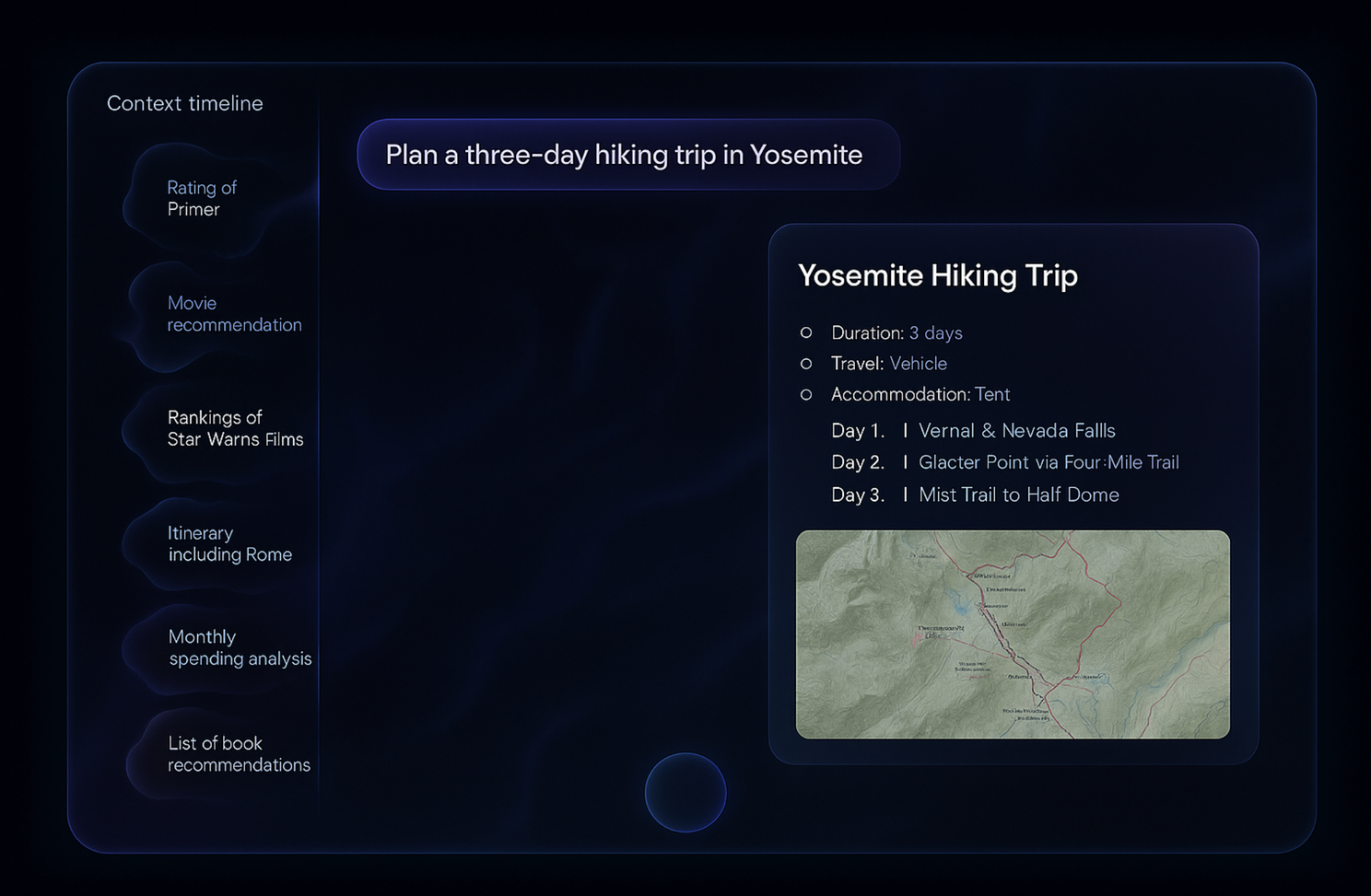

The OS will summon the right agents, assemble the relevant tools, and respond through a dynamic, ephemeral interface — a spreadsheet here, a dashboard there, a summary pane when needed, and nothing when not.

You’ll interact by voice or prompt, not pointer. You won’t navigate apps, you’ll navigate outcomes.

1. The OS will be built around agents, not apps

The legacy app model, where tools live in silos, will be replaced by a system of ambient, cooperative agents. These agents will share memory, context, and intent across your data and workflows.

You won’t open Salesforce or HubSpot. Instead, you’ll say, “Help me prepare Q3 pipeline insights,” and the system will gather data, generate the narrative, and prep materials, surfacing only the UI elements you need to act.

2. Hardware will serve the interface, not the other way around

Just as smartphones collapsed the need for separate cameras, GPS devices, and music players, OpenAI’s new device ecosystem will collapse your digital needs into a modular, intent-driven computing layer.

At its center: a reimagined set of wearables. Sculpted over-ear headphones running an LLM on the edge (voice-first, always listening, private by design) become your primary input and output device.

Paired with smart glasses (Gaze), ultra-lightweight and HUD-capable, they offer holographic, in-lens, contextual information without disruption.

Both connect wirelessly to a small AI “core” in your pocket: a compute node that stores memory, orchestrates agents, and stays in sync across modalities.

The result? You speak. The AI listens. The UI adapts. And your computing experience becomes continuous, ambient, and coordinated.

Not just across apps, but across reality. This is the shift from devices that host apps, to devices that host agents.

3. Memory will become the new home screen

Forget icons, folders, and launchers. The center of this experience will be your long-term memory stream – a persistent, contextual, searchable layer that remembers everything you’ve written, said, decided, or discussed.

You’ll ask, “What did the CFO flag in last quarter’s board prep?” and the system will retrieve the note, summarise the concern, and show you how it’s been addressed – all within the same flow.

4. Language becomes the UX – and UX becomes invisible

Instead of menus and tabs, the interface will respond to intent. You’ll say, “Visualize our churn rate by region with notes,” and see a chart with commentary appear over your workspace.

The UI will be temporary and task-specific (e.g. a writing pane, a data explorer, a strategic board) and will disappear once the job is done. No clutter. No friction.

Just clarity, on demand.

5. The endgame: From assistant to operating system

ChatGPT, Codex, and Sora aren’t just impressive products; they’re early indicators of what’s coming. Together, they form the basis of a new kind of OS, one where conversation is the core protocol, agents are the new apps, and memory is the new filesystem.

OpenAI isn’t just building software. It’s building the nervous system of work and life. Something that listens, learns, adapts, and acts with you.

The real play is an AI OS that integrates reasoning, input, memory, and execution. Google, Anthropic, and xAI may compete on models, but only OpenAI has the unified depth across interface, agent architecture, productivity memory, and developer APIs.

The GUI gave us the desktop. Multitouch gave us the smartphone. This next leap is ambient, agentic, AI-first computing, and it’ll give us the interface to everything.

You’ll invoke outcomes, not launch apps.

Strategic moves for product leaders

I guess you’re thinking – so what does that mean for me and my SaaS app?!

This isn’t the time to be clever. It’s time to be clear. The interface is changing, and if you’re building products in this new reality, you must ask:

- Does your product produce high-value, decision-grade signals?

- Can it be accessed and reasoned over by agents – not just humans?

- Is it built to feed, cooperate with, or be embedded inside this agentic layer?

HubSpot didn’t just build an integration. They built an MCP connector and made their product native to ChatGPT’s interface. If you aren’t present in the AI’s interface layer, you’re absent from the user’s intent path. Connectors like this are the synapses of an agentic nervous system.

To adapt:

- Expose data and derived insights: If your product surfaces high-value decisions, make it readable by agents. Signals matter.

- Build native connectors: Integrate using the Model Context Protocol (MCP), the open standard introduced by Anthropic and now supported by OpenAI, or similar; be agent-invokable.

- Structure your docs: Schema-aligned, queryable, permission-aware help content becomes memory.

- Design for AI co-pilots: Assume an agent assists each user; design onboarding accordingly.

- Instrument for agent feedback: Let agents track deltas, context changes, and intent resolution.

- Rethink pricing: You may charge by actions or usage, not just seats.

- Make it promptable: Every feature should be expressible in natural language.

- Optimise for AI power users: What does your UI look like to an agent with perfect memory?

- Teach prompt and RAG fluency: Teams must understand the new workflows; prompts are the new API.

- Build niche, not general: The broader and more generic your tool, the more likely the AI OS eats it.
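To make “build native connectors” and “make it promptable” a little more concrete, here is a minimal, stdlib-only Python sketch of the core of an MCP-style tool server. It answers the JSON-RPC 2.0 methods `tools/list` and `tools/call`, describing a single tool with a natural-language description and a JSON Schema so an agent can discover and invoke it. The tool `get_pipeline_summary`, its sample data, and the `handle` function are hypothetical illustrations, not part of any product mentioned above. A real MCP server would also need the `initialize` handshake and a transport such as stdio or HTTP, and in practice you would likely build on an official MCP SDK rather than hand-rolling the protocol.

```python
import json

# Hypothetical sample data standing in for your product's backend.
PIPELINE = {
    "Q3-2025": {"open_deals": 42, "weighted_value": 1_250_000},
}

# Tool metadata in the shape MCP's tools/list returns: a name, a
# natural-language description, and a JSON Schema for the arguments.
TOOLS = [
    {
        "name": "get_pipeline_summary",  # hypothetical tool
        "description": "Summarise open sales pipeline for a fiscal quarter.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "quarter": {"type": "string", "description": "e.g. Q3-2025"}
            },
            "required": ["quarter"],
        },
    }
]


def get_pipeline_summary(quarter: str) -> str:
    """Hypothetical business logic the tool exposes to agents."""
    data = PIPELINE.get(quarter)
    if data is None:
        return f"No pipeline data for {quarter}."
    return (
        f"{quarter}: {data['open_deals']} open deals, "
        f"weighted value {data['weighted_value']:,}."
    )


def _response(req_id, result):
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


def _error(req_id, code, message):
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle(request_json: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the JSON-encoded reply."""
    req = json.loads(request_json)
    req_id = req.get("id")
    method = req.get("method")
    params = req.get("params") or {}

    if method == "tools/list":
        # Let the agent discover what it can call, and how.
        return _response(req_id, {"tools": TOOLS})

    if method == "tools/call":
        if params.get("name") != "get_pipeline_summary":
            return _error(req_id, -32602, f"Unknown tool: {params.get('name')}")
        quarter = (params.get("arguments") or {}).get("quarter")
        if not quarter:
            return _error(req_id, -32602, "Missing required argument: quarter")
        text = get_pipeline_summary(quarter)
        # Tool results are returned as a list of content items.
        return _response(
            req_id, {"content": [{"type": "text", "text": text}], "isError": False}
        )

    # JSON-RPC 2.0 "Method not found".
    return _error(req_id, -32601, f"Method not found: {method}")


if __name__ == "__main__":
    # What an agent's tools/call request, and the reply, might look like.
    request = {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "get_pipeline_summary", "arguments": {"quarter": "Q3-2025"}},
    }
    print(handle(json.dumps(request)))
```

The design point is that the description and schema are written for a model, not a human clicking through a UI: the same metadata that lets an agent invoke the feature also documents it in plain language.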

Closing thoughts

This isn’t the end of software.

But it’s the end of software as a destination.

The interface to everything is here: conversational, ambient, invisible. Your product either supports it – or gets subsumed by it.

Welcome to what’s next.

Follow us on LinkedIn