You’ve nailed the demo. The champion loves it. The business case is solid. And then someone forwards your deal to the security team, and it goes quiet for six weeks.

I’ve been on the other side of that silence. As an AI security product leader, I’ve spent the last several years advising Fortune 500 CISOs on exactly what they evaluate when a new AI product lands in their review queue.

What I see, repeatedly, is that the deals that stall aren’t killed by bad products. They’re killed by product teams that treated the security review as a late-stage obstacle rather than a design constraint from the very beginning.

Here is the practical guide I wish more product teams had before they built their enterprise motion. If you’re building an AI product that touches enterprise workflows, sensitive data, or any function that a CISO oversees, this is for you.

Why AI products get stuck in security reviews

Enterprise security teams aren’t trying to kill your deal. They’re trying to protect their organization from a category of risk that has gotten significantly more complex in the last two years. AI products introduce concerns that traditional SaaS procurement reviews weren’t built to evaluate:

- What data does the model train on?

- Can outputs be audited?

- Can an employee manipulate the AI’s behavior through its inputs?

- Does the product create new pathways for sensitive data to leave the environment?

The security teams asking these questions aren’t being obstructive; they’re being responsible. The problem is that most AI product teams have no idea these questions are coming until they’re already in the room, scrambling for answers that should have been documented months earlier.

The result is a predictable deal pattern: strong bottom-up adoption, enthusiastic champion, stalled at security review, deal pushed to next quarter, champion loses momentum, deal dies. It’s not a sales problem. It’s a product and go-to-market (GTM) design problem.

Who is actually in the room

Before you can design for the review, you need to understand who conducts it. Enterprise AI procurement isn’t a single conversation. It’s a sequential set of evaluations by stakeholders with different mandates, different vocabularies, and different definitions of “good enough.” Here’s what you’re actually navigating:

The most common mistake product teams make is treating the security review as a single evaluation by a single stakeholder. It isn’t. It’s a relay race, and each baton pass is an opportunity for the deal to drop.

The pre-review checklist: What to build before you pitch to an enterprise

The following items should exist before your first enterprise conversation – not just before the security review. Enterprise buyers talk to each other, and nothing accelerates a deal faster than a champion who can hand a clean security package to their IT team on day one.

1. A plain-language data flow document

Write a one-page document that answers: What data enters your product? Where does it go? Who can see it? How long is it retained? Is it used to train models, and if so, how? This document will be passed around by people who are not technical. Write it for them.
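One way to keep that document honest is to generate it from a small structured record, so the plain-language version and the engineering reality cannot drift apart. The sketch below is a minimal illustration, not a prescribed format: the `DataFlowEntry` fields, the `render` helper, and the example values are all hypothetical and simply mirror the five questions above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlowEntry:
    """One row of a plain-language data flow summary (hypothetical schema)."""
    data_type: str           # what data enters the product
    destination: str         # where it goes
    visible_to: str          # who can see it
    retention_days: int      # how long it is retained
    used_for_training: bool  # whether it is used to train models


def render(entries: list[DataFlowEntry]) -> str:
    """Turn each entry into one sentence a non-technical reader can follow."""
    lines = []
    for e in entries:
        training = "is" if e.used_for_training else "is not"
        lines.append(
            f"{e.data_type} goes to {e.destination}, is visible to {e.visible_to}, "
            f"is kept for {e.retention_days} days, and {training} used to train models."
        )
    return "\n".join(lines)


# Illustrative values only; replace them with your product's real answers.
EXAMPLE_FLOWS = [
    DataFlowEntry("Support ticket text", "our hosted inference service",
                  "the customer's admins", 30, False),
]

if __name__ == "__main__":
    print(render(EXAMPLE_FLOWS))
```

Keeping one entry per data type makes it obvious when a new feature adds a flow that the document does not yet cover.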

2. A security FAQ (before they ask)

Proactively answer the twenty questions every security team asks. Is data encrypted in transit and at rest? What encryption standard? Do you support SSO/SAML? What is your access control model? Do you have a penetration test report? What is your incident response SLA? Do you have SOC 2 Type II? Is a Data Processing Agreement (DPA) available?

Every question you can answer before it’s asked shortens the review by weeks.

3. A trust center or security portal

Companies like Vanta, Drata, and Secureframe make it easy to build a self-serve security portal where enterprise buyers can access your compliance certifications, pen test results, and policy documentation without waiting for a sales engineer.

If you have a SOC 2 report, ISO 27001 certification, or documented HIPAA compliance, publish that status somewhere public and easy to find. If you don’t have these yet, start working toward SOC 2 Type II. It’s the most common enterprise prerequisite, and its absence is one of the most common deal killers in the mid-market.

4. A data processing agreement (DPA) ready to sign

A DPA is a contractual agreement that specifies how you handle customer data as a data processor. For any enterprise buyer operating under GDPR, CCPA, or similar regulations, it’s non-negotiable. If you make a legal team wait three weeks to receive a DPA, the deal loses three weeks. Have a standard DPA ready to send on the same day it is requested.

5. Configurable data controls

Enterprise buyers do not want to take your default configuration. They want to configure what data your product can access, what it can store, and what it can act on. If your product does not expose these controls, the security team will assume the worst-case configuration, because that’s what they’re trained to do.

Role-based access controls, audit logging, and the ability to restrict AI functionality by user group or data category are not enterprise nice-to-haves. They are table stakes.
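As a rough illustration of what "restrict AI functionality by user group or data category" can mean in practice, here is a minimal sketch of a deny-by-default policy check that writes an audit entry for every decision. The `Policy`, `AuditLog`, and `is_allowed` names, and the group and category labels used with them, are assumptions made up for this example rather than any particular product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Policy:
    """Hypothetical policy: data categories each user group may send to AI features."""
    allowed: dict[str, set[str]] = field(default_factory=dict)


@dataclass
class AuditLog:
    """In-memory audit trail; a real system would use durable, append-only storage."""
    entries: list[dict] = field(default_factory=list)

    def record(self, user: str, group: str, category: str, decision: str) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "group": group,
            "category": category,
            "decision": decision,
        })


def is_allowed(policy: Policy, log: AuditLog,
               user: str, group: str, category: str) -> bool:
    """Deny unless the group is explicitly granted the category; log every decision."""
    ok = category in policy.allowed.get(group, set())
    log.record(user, group, category, "allow" if ok else "deny")
    return ok


if __name__ == "__main__":
    policy = Policy({"support": {"tickets"}})
    log = AuditLog()
    print(is_allowed(policy, log, "ana", "support", "tickets"))   # granted category
    print(is_allowed(policy, log, "ana", "support", "payroll"))   # not granted
    print(len(log.entries))
```

The deny-by-default choice matters here: it means an unconfigured group gets no AI access, which matches the worst-case assumption a security reviewer will make anyway.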

The three things that actually kill AI deals in security reviews

Beyond the checklist, there are three patterns I see repeatedly that kill otherwise strong AI deals late in the security review cycle:

1. Model training ambiguity

If your product uses customer data to improve models, or if your privacy policy is ambiguous about whether it does, the security team will assume it does, and they will flag it. Be explicit. If you don’t train on customer data, say so clearly, contractually. If you do, explain the opt-out mechanism. Ambiguity is treated as a red flag, not a nuance.

2. Inability to explain AI decisions

For any AI feature that influences a consequential decision – a recommendation, a classification, a generated output that a human will act on – the security and compliance team will ask: how do we audit this? If the answer is “you can’t,” that’s a serious problem in regulated industries. Building even basic output logging and the ability to trace AI recommendations back to inputs isn’t just a compliance feature; it’s a trust feature.
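Basic output logging does not have to be elaborate. The sketch below shows one possible shape, assuming a simple list as the store: each AI output gets a record ID, a timestamp, the model version, and a SHA-256 fingerprint of the input, so an auditor can later confirm which input produced which output. The function names and record fields are hypothetical, and storing a hash rather than the raw input is a design choice made for this example, not a requirement.

```python
import hashlib
import uuid
from datetime import datetime, timezone


def fingerprint(text: str) -> str:
    """SHA-256 hex digest of the input, so raw prompts need not be stored."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def log_ai_output(store: list, user_id: str, model_version: str,
                  prompt: str, output: str) -> str:
    """Append one audit record and return its ID for later tracing."""
    record_id = str(uuid.uuid4())
    store.append({
        "record_id": record_id,
        "time": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "model_version": model_version,
        "input_sha256": fingerprint(prompt),
        "output": output,
    })
    return record_id


def trace(store: list, record_id: str):
    """Return the record for a given ID, or None if it was never logged."""
    return next((r for r in store if r["record_id"] == record_id), None)


if __name__ == "__main__":
    store = []
    rid = log_ai_output(store, "u-17", "model-v1", "Classify this ticket", "billing")
    record = trace(store, rid)
    # An auditor holding the original input can verify it matches the logged hash.
    print(record["input_sha256"] == fingerprint("Classify this ticket"))
```

If the customer needs to replay decisions rather than only verify them, the raw input would have to be retained as well, subject to the retention rules in your data flow document.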

3. No incident response clarity

What happens if your product is involved in a data breach or a significant AI error? Who does the customer call? What’s your SLA for notification? What is your remediation process? Most product teams have never written an answer to these questions because they have never had an incident.

Enterprise security teams evaluate products as if an incident is inevitable because, at scale, it is. Having a documented incident response process, even a simple one, communicates maturity. Not having one communicates risk.
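Even a simple process benefits from having the notification SLA written down as data rather than held in someone's head. The following is a minimal sketch under stated assumptions: the severity names and hour values in `NOTIFICATION_SLA_HOURS` are placeholders, not recommended or contractual figures, and the helper functions are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Placeholder SLA: hours allowed between detection and first customer notification.
# These numbers are illustrative only; use the values in your own contracts.
NOTIFICATION_SLA_HOURS = {"critical": 24, "high": 48, "low": 120}


def notification_deadline(detected_at: datetime, severity: str) -> datetime:
    """Latest time the customer must be notified for an incident of this severity."""
    return detected_at + timedelta(hours=NOTIFICATION_SLA_HOURS[severity])


def is_overdue(detected_at: datetime, severity: str, now: datetime) -> bool:
    """True if the notification deadline has already passed."""
    return now > notification_deadline(detected_at, severity)


if __name__ == "__main__":
    detected = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
    print(notification_deadline(detected, "critical").isoformat())
    print(is_overdue(detected, "critical", datetime(2024, 1, 2, 10, 0, tzinfo=timezone.utc)))
```

A table like this also gives the security team a concrete answer to "what's your SLA for notification?" that can be copied straight into the security FAQ.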

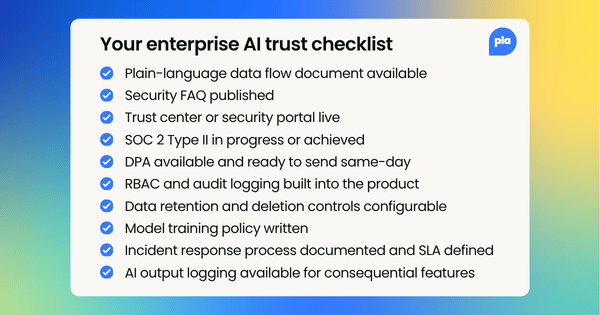

Quick reference: Your enterprise AI trust checklist

✓ Plain-language data flow document written and available

✓ Security FAQ published (20+ questions answered proactively)

✓ Trust Center or security portal live with certifications

✓ SOC 2 Type II in progress or achieved

✓ DPA available and ready to send same-day

✓ RBAC and audit logging built into the product

✓ Data retention and deletion controls configurable

✓ Model training policy written and contractually clear

✓ Incident response process documented and SLA defined

✓ AI output logging available for consequential features

⚠️ Not yet SOC 2 certified? At a minimum, complete a self-assessment and document your security controls. Many enterprise buyers will accept this during a pilot if you have a committed timeline to certification.

⚠️ Don’t have a legal team? Use a template DPA (the EU’s Standard Contractual Clauses are a good starting point for GDPR data transfers) and have a lawyer review it before your first enterprise pitch.

How to use security readiness as a GTM differentiator

Here’s the mindset shift that changes everything: most of your competitors are not doing this. The bar for enterprise AI security readiness is low enough that completing the checklist above puts you in the top tier of vendors a security team has reviewed this quarter.

That means you can lead with it. Add a “Security & Compliance” page to your website. Reference your SOC 2 certification in your pitch. Include your Trust Center URL in the first email to a new enterprise account. When a champion says, “I need to loop in our security team,” say, “Great, here is our security package. It’s designed to answer all their questions.”

The product teams that have done this work don’t just win deals faster. They win deals that their competitors lose at the security review stage. In a market where AI products are proliferating rapidly, and enterprise buyers are increasingly cautious, that’s a durable competitive advantage.
