When I first brought AI into my product workflow last year, I thought it would just save me a bit of time. That’s it. What I didn’t expect was how much it would reshape the way we make decisions.

I still remember one of our quarterly business review meetings – usually a long debate driven by gut feelings and half-baked metrics. This time, though, the room felt different. AI had surfaced patterns we hadn’t even considered. Suddenly, instead of arguing over hunches, we were digging through real insights together.

That was when it clicked for me: AI wasn’t just another productivity hack. It was changing what it even means to be a product manager.

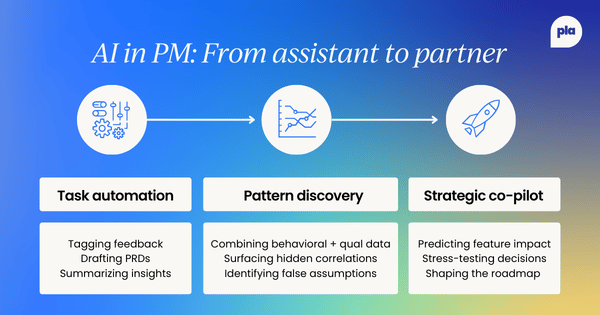

From task automation to strategic partner

At first, my use of AI was pretty basic. We’d send user feedback to Gemini to help us sort it into themes, or get Claude to draft early versions of product requirement documents. It shaved hours off repetitive work and freed up headspace, which was nice, but nothing earth-shattering.

Then we got curious. Instead of just tagging feedback, we started feeding the models user behavior data alongside complaints to see what patterns popped up. We ran feature requests through models that also considered engagement numbers, support ticket volume, and even revenue projections. That’s when things got interesting.

It turned out that the loudest requests for a specific new dashboard weren't coming from our power users, as we had assumed, but from users who were struggling to find existing features. The model identified a correlation between these requests and "rage clicks" (rapid, repeated clicks that signal frustration) on the navigation bar. We didn't need to build a new feature; we just needed to fix our navigation. Without that insight, we would have spent three sprints building the wrong thing.

Moments like that have completely changed how we build products. We used to lean on stakeholder opinions, intuition, and a smattering of data. Now AI can sweep through huge datasets, find subtle patterns, and point us toward options we wouldn’t have seen coming.

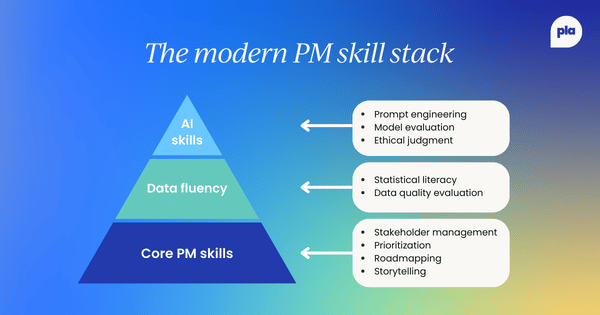

Expanding the PM skillset

This shift changed what we need to be good at.

Product managers used to be generalists. Now, I spend as much time thinking about prompt engineering as I do writing user stories. Framing a question so that an AI model actually understands our context has become half the job. A vague prompt gets you fluff. A precise one can spark real progress.

Model evaluation is another new muscle. If an AI system says Feature A will outperform Feature B, I want to know what data that prediction is based on, how confident the model is, and where its blind spots might be. I don’t need to be a data scientist, but I do need enough technical literacy to ask the right questions.

Data literacy isn’t new for PMs, but the stakes are way higher now. We’re looking at datasets too large to parse by hand, covering customer segments, usage patterns, and market shifts all at once. Understanding statistical significance, correlation vs. causation, and data quality is no longer “nice to have.” It’s survival.

And then there’s ethics. This used to be an afterthought; now it’s front and center. When our AI suggested optimizing a notification algorithm to maximize 'time in app,' we realized it was prioritizing addictive behaviors over user utility. We had to stop and ask: just because we can spike engagement this way, should we? Will users eventually resent the product? Those conversations are messy, but they matter.

None of this replaces the old PM skills – it stacks on top. Stakeholder management now includes explaining machine learning outputs to executives who've never seen a confusion matrix. Storytelling involves translating model results into something humans can relate to. Even empathy looks different: you’re trying to understand how people feel about experiences partly shaped by algorithms.

New collaboration dynamics

AI has also changed how our teams work together.

My relationship with engineering used to revolve around requirements handoffs and sprint planning. Now we build AI prototypes side by side, tweaking models that directly shape the user experience. It feels less like throwing specs over the wall and more like running an ongoing lab experiment together.

Design collaboration has become far more experimental. Instead of debating design principles, we spin up hundreds of AI-generated onboarding flows, test them with small cohorts, and see what sticks. Something that used to take months of incremental iteration now takes weeks.

Even our data scientists feel more like creative partners now. We spend hours arguing about feature engineering, training data quality, and model metrics. We’re not just consuming their output. We’re co-producing it with them. It’s messy and fun and a little exhausting.

All of this has nudged our culture. The old approach of long specs and five-year roadmaps is giving way to high-velocity experiments. AI lets us test ideas quickly, pivot even faster, and occasionally stumble into discoveries we never planned.

It’s frantic, but the quality of the insights is worth the chaos.

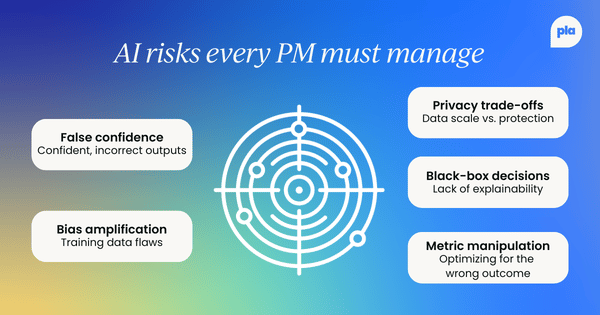

Risks and responsibility

Of course, with more power come more ways to mess things up.

AI can be wildly confident and dead wrong. I’ve learned to stay skeptical: every AI suggestion now goes through multiple data checks. Once, a model insisted that users loved a certain feature, based on its engagement metrics. It turned out users were stuck in a bug loop that forced them to keep using it.

Bias is another headache. Models pick up the flaws of their training data, and if you’re not careful, they can quietly amplify unfair treatment of certain user groups. We’ve had to run bias audits and pull in outside perspectives just to catch what we miss.
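
The post doesn't describe what those audits contain, so the following is only an illustration of one common first-pass check, not the team's process: compare how often each user group gets a favorable outcome, and flag any group whose rate falls below 80% of the best-served group's rate (the "four-fifths" rule of thumb). The group labels, the meaning of "selected", and the data below are hypothetical.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the selection rate per group from (group, was_selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_flags(records, threshold=0.8):
    """Flag groups whose rate falls below `threshold` times the best-served group's rate.

    threshold=0.8 mirrors the "four-fifths" rule of thumb; it is a screening
    heuristic, not proof of fairness or unfairness.
    """
    rates = selection_rates(records)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {}  # nobody was selected, so there is no ratio to compare
    return {group: rate / best for group, rate in rates.items() if rate / best < threshold}


# Hypothetical example: "selected" could mean a user was shown a feature or offer.
records = ([("group_a", True)] * 6 + [("group_a", False)] * 4
           + [("group_b", True)] * 3 + [("group_b", False)] * 7)
print(disparate_impact_flags(records))
```

Here `group_b` is flagged with a ratio of 0.5. A check like this only catches one narrow kind of disparity, which is why outside perspectives still matter.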

Data privacy is its own beast. AI thrives on massive datasets, but collecting that much information raises serious privacy questions. We’ve started using techniques like differential privacy and federated learning to strike a balance between useful insights and user privacy.
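
The post doesn't say how these techniques were applied, so this is a textbook illustration rather than the team's setup: a minimal Python sketch of the Laplace mechanism, the classic way to release a count under differential privacy by adding noise scaled to sensitivity divided by epsilon. The query and parameter values are hypothetical, and federated learning, a separate technique, isn't shown.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale).

    The difference of two independent exponential draws with rate 1/scale
    is Laplace-distributed with that scale.
    """
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    If each user contributes at most one to the count, adding or removing a
    user changes it by at most 1, so the sensitivity is 1.
    Smaller epsilon means more noise and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)
# Hypothetical query: how many users clicked a feature this week.
print(round(private_count(1_000, epsilon=0.5, rng=rng), 1))
```

The key design choice is the privacy budget epsilon: halving it doubles the noise scale. Production systems also track how much budget repeated queries consume, which this sketch does not do.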

Then there’s the black-box problem. If a model’s decision-making is too opaque, it’s risky to trust. I push for explainability even if it means giving up a bit of predictive accuracy, because if we can’t explain a decision, we can’t defend it.

At the end of the day, the responsibility for decisions still sits with us, not the AI. It can process information and offer options, but only humans can weigh trade-offs, understand context, and own the outcome. That’s why we now have clear decision frameworks, model audits, escalation paths for sketchy AI outputs, and cross-functional reviews for big AI-driven changes.

The goal isn’t to eliminate every risk – it’s to see them clearly and handle them responsibly.
