Are you still treating AI as a glorified search engine, or are you ready to let it actually do the work for you?

While the last few years have been defined by generative AI’s chat interface, the next phase will be defined by action. We’re moving from models that simply provide information to agents that autonomously execute complex workflows.

In this article, you’ll discover the fundamental shift from generative AI to agentic AI, the core architectural components required to build agents, and the practical and strategic considerations – from cost management to ethics – that enterprise leaders must address to scale these solutions effectively.

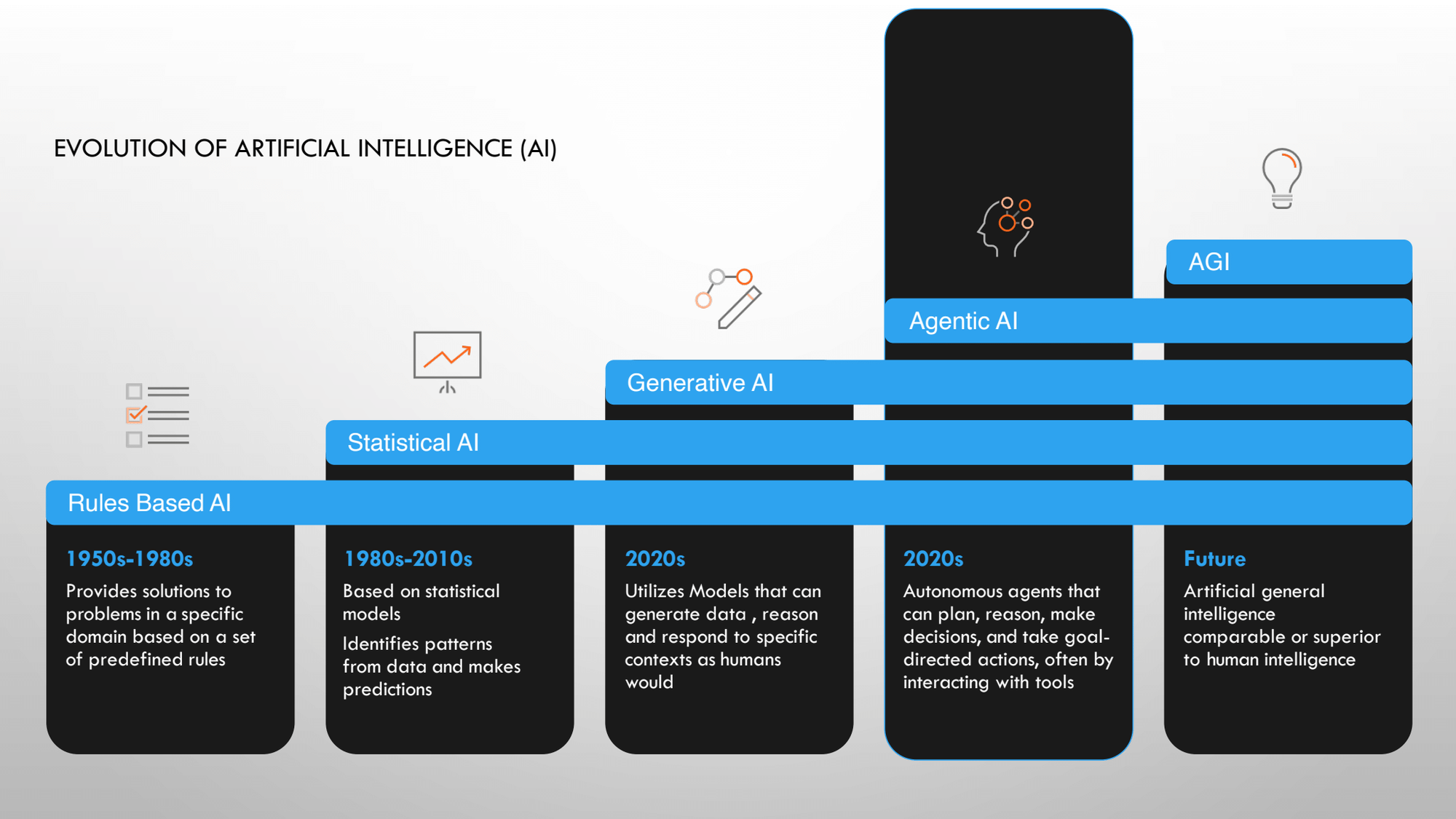

Understanding AI terminology

Before we dive into the practical stuff, we need to get on the same page about what we're actually talking about. AI can feel overwhelming, so let's break it down chronologically to see how we got here.

Back in the 1980s, we had decision management systems: rule-based software built on simple “if-then” statements. You'd be surprised how many systems in financial services still operate this way. We're working to modernize them, but that's the reality many of us face.
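To make the “if-then” idea concrete, here is a minimal sketch of a rule-based decision engine. The rules, thresholds, and field names are hypothetical and chosen only for illustration. The point is that every outcome is hard-coded by a person, and nothing is learned from data.

```python
# Toy rule-based decision engine in the spirit of classic decision management systems.
# Rules, thresholds, and field names are hypothetical, for illustration only.

def decide(transaction: dict) -> str:
    """Return a decision for a card transaction using fixed if-then rules."""
    if transaction["amount"] > 10_000:
        return "manual_review"   # large amounts always go to a human
    if transaction["country"] != transaction["home_country"]:
        return "flag"            # foreign transactions get flagged
    return "approve"             # everything else passes


print(decide({"amount": 50, "country": "US", "home_country": "US"}))      # approve
print(decide({"amount": 20_000, "country": "US", "home_country": "US"}))  # manual_review
```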

Then came predictive AI. This includes statistical AI and machine learning models that use past data to predict future outcomes. Think about those Amazon recommendations or Netflix suggestions. They're fundamentally using this technology to predict what you might want next.
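Here is a deliberately simple sketch of “using past data to predict what you might want next”: a co-occurrence recommender of the “customers who bought X also bought Y” kind. The order data is made up. Services like Amazon and Netflix use far more sophisticated models, so treat this only as an illustration of the principle.

```python
from collections import Counter
from itertools import permutations


def build_co_occurrence(orders):
    """Count how often each pair of items appeared together in past orders."""
    counts = {}
    for order in orders:
        for a, b in permutations(set(order), 2):
            counts.setdefault(a, Counter())[b] += 1
    return counts


def recommend(counts, item, k=2):
    """Predict the k items most often bought alongside `item` in the past."""
    return [other for other, _ in counts.get(item, Counter()).most_common(k)]


# Hypothetical purchase history.
past_orders = [
    ["tent", "sleeping_bag", "lantern"],
    ["tent", "sleeping_bag", "lantern"],
    ["tent", "sleeping_bag"],
    ["tent", "stove"],
]
counts = build_co_occurrence(past_orders)
print(recommend(counts, "tent"))  # ['sleeping_bag', 'lantern']
```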

More recently, generative AI burst onto the scene. ChatGPT made it mainstream, and at its core, it works by predicting text. When you type "Amreen is a superstar at," it predicts what comes next based on patterns in its internet-scale training data. Since launch, these models have evolved to reason through complex problems and break them down into steps. This is where large language models (LLMs) like Gemini 2.5 live.
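The “predict what comes next” idea can be shown with a toy bigram model that counts which word follows which in its training text. This is a large simplification: real LLMs use neural networks over tokens and are trained on enormous corpora. The tiny corpus below is invented purely for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows each word in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(model, prompt):
    """Predict the most frequent next word after the prompt's last word."""
    last = prompt.lower().split()[-1]
    if last not in model:
        return None  # never seen this word during training
    return model[last].most_common(1)[0][0]


corpus = "she is a superstar at work . he is a superstar at chess . she is great at work ."
model = train_bigrams(corpus)
print(predict_next(model, "Amreen is a superstar at"))  # work
```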

Agentic AI takes things further. Rather than just making recommendations, it actually does things for you. You give it a goal, and it figures out iteratively what needs to happen to achieve that goal. It learns, adapts, and delivers the work.

The key distinction? Complexity. Agentic AI thrives in complex scenarios where simple predictions won't cut it. Most problems in our products can be solved with statistical, predictive, or generative AI. You don't always need agentic AI. But when you do need it, the complexity of the problem justifies the investment.

Then there's AGI (artificial general intelligence), which remains mostly theoretical. Big tech companies are chasing the dream of AGI that can handle all human activities better than humans. Meanwhile, agentic AI excels at specific tasks like booking meetings or flights.

How agentic AI works

Let me walk you through the architecture of an agent to make this concrete. Picture this: a user makes a request, and eventually, they get an output. But what happens in between?

First, you have an orchestrator. This could be simple Python code that acts as the conductor. It talks to the LLM (your ChatGPT, Gemini, or similar) and says something like, "You are Amreen's personal secretary, and here's what you need to do."
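Here is a minimal sketch of that orchestrator role. It wraps the user's request with standing instructions (a “system” message) and forwards both to the model. The role-based message list follows a convention common to chat-model APIs, but `call_llm` is a hypothetical stub that echoes its input so the example runs offline. A real orchestrator would call your provider's SDK at that point.

```python
# Minimal orchestrator sketch. `call_llm` is a hypothetical stand-in for a real
# model API (ChatGPT, Gemini, ...); it simply echoes so the example runs offline.

SYSTEM_PROMPT = "You are Amreen's personal secretary. Help her manage meetings and travel."


def call_llm(messages):
    """Hypothetical LLM client stub: a real one would send `messages` to a model."""
    last_user = messages[-1]["content"]
    return f"[model reply to: {last_user}]"


def orchestrate(user_request):
    """Combine the system instructions with the user's request and query the LLM."""
    messages = [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_request},
    ]
    return call_llm(messages)


print(orchestrate("Book a meeting with the design team on Friday."))
```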

The LLM then has access to several key components:

- Tools are specialized functions the agent can call, such as a calculator. Here's something that might surprise you: LLMs are trained to predict text, not to calculate. Ask ChatGPT, “What’s 562,894 plus 220,978?” and it may give you something close to, but not exactly, the right answer (783,872). So, we give agents access to calculators for math, APIs for data retrieval, and so on.

- Retrieval provides access to databases. At Mastercard, for instance, we have cardholder data, transaction histories, and more. If you want insights from this data, the agent needs proper access through retrieval mechanisms.

- Memory gives the agent context. When you interact with the same analytical tool daily, it learns how you communicate and what you typically need. A ChatGPT session maintains context within a conversation, but close the session and start fresh, and that context can vanish. Agents are designed to maintain persistent memory and context across sessions.
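The sketch below shows each of the three components as plain Python: a calculator tool that computes exactly, a retrieval function over a hypothetical in-memory “database” standing in for real cardholder or transaction data, and a memory store written to a JSON file so it outlives the session. All names and data here are illustrative assumptions. They are not an actual Mastercard or vendor API.

```python
import json
import tempfile
from pathlib import Path


# Tool: exact arithmetic, so the LLM never has to "predict" digits.
def calculator_add(a: int, b: int) -> int:
    return a + b


# Retrieval: a hypothetical in-memory stand-in for a real transaction database.
TRANSACTIONS = {
    "card_123": [
        {"merchant": "Airline", "amount": 420.00},
        {"merchant": "Hotel", "amount": 180.50},
    ],
}


def retrieve_transactions(card_id: str) -> list:
    """Look up stored transactions for a card; unknown cards return an empty list."""
    return TRANSACTIONS.get(card_id, [])


# Memory: written to a JSON file so it survives after the session ends.
class FileMemory:
    def __init__(self, path):
        self.path = Path(path)

    def load(self) -> dict:
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def remember(self, key: str, value) -> None:
        data = self.load()
        data[key] = value
        self.path.write_text(json.dumps(data))


print(calculator_add(562_894, 220_978))        # 783872
print(len(retrieve_transactions("card_123")))  # 2

memory_file = Path(tempfile.gettempdir()) / "agent_memory_demo.json"
FileMemory(memory_file).remember("preferred_format", "bullet points")
# A fresh object (as in a new session) still sees what was stored earlier.
print(FileMemory(memory_file).load()["preferred_format"])  # bullet points
```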

Together, these components form the agent. The LLM is just one piece of this larger system.
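Here is a hypothetical end-to-end sketch of how the pieces interact. The orchestrator runs a loop: the model decides whether it needs a tool, the orchestrator executes that tool and feeds the result back, and the loop ends when the model produces a final answer. `fake_llm` is a scripted stub that stands in for a real model returning structured tool requests. The step limit guards against an agent that never finishes.

```python
# Hypothetical agent loop: a stubbed "LLM" chooses between calling a tool and
# answering. A real agent would replace `fake_llm` with an actual model call.


def add(a, b):
    return a + b


TOOLS = {"add": add}


def fake_llm(history):
    """Stub model: requests the calculator once, then answers using its result."""
    tool_results = [h for h in history if h["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "add", "args": {"a": 562_894, "b": 220_978}}
    return {"type": "final", "content": f"The total is {tool_results[-1]['content']:,}."}


def run_agent(goal, max_steps=5):
    """Iterate: ask the model, run any requested tool, feed the result back."""
    history = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        decision = fake_llm(history)
        if decision["type"] == "final":
            return decision["content"]
        result = TOOLS[decision["name"]](**decision["args"])
        history.append({"role": "tool", "content": result})
    return "Stopped: step limit reached."


print(run_agent("What is 562,894 plus 220,978?"))  # The total is 783,872.
```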