I lead growth at Productboard where we make Productboard Spark, the only AI built for product managers. I spend most of my days talking with product leaders about what's changing in how we build with AI. The same question keeps coming up in almost every conversation.

Do we still need product managers?

You've seen the LinkedIn posts, the hot takes, the tweets. Coding agents are turning requirements into pull requests overnight. So, in a world where you can build almost anything instantly, do you still need a product manager (PM)?

My answer is an emphatic yes. But what the job actually looks like day-to-day is changing fast. If we don't change with it, we'll get left behind, not because AI replaces us, but because the person who is AI-fluent will. We become the slowest part of the system. And there’s no slower part of the system than a PM’s favorite job…writing a spec.

Everyone's saying the spec is dead. Prototyping is all the rage. But you still have to tell an AI agent what to build, in natural language. Call it a prompt, instructions, or a brief…it's a spec. A spec is just a set of instructions. To prototype anything, you have to define what the agent should build. Specs matter more now than ever. We’ll go over how to take advantage of that shift.

Product management was never really simple

If you take a step back, product management is a cycle: understand, curate and decide, orchestrate and ship, evaluate. In other words, you figure out what to build, you execute the vision, and you measure whether what you built made an impact.

Simple in theory. As you all know, it's anything but simple in practice.

To get to the decision part, you have to understand the company strategy, which might live in a wiki, Confluence, or Notion. You have to understand what your customers are saying by going through feedback scattered across Zendesk, Slack, email, Gong, and probably five other systems inside your own company. You have to understand how people actually use your product through analytics in Amplitude, Hex, Looker, or some homegrown tooling. And then you have to research the broader market and competitive landscape through AI tools, Google, and review sites.

That's not even an exhaustive list. Those are just a subset of things that go into making one good decision.

On the build side, there are the dreaded alignment meetings. Feedback discussions. The leadership conversation about what makes the next quarter's roadmap. Collaboration with design on what you're actually going to build. Then the actual build with engineering, which has gotten orders of magnitude faster with AI.

And finally, who cares about your product if nobody knows how to use it? You need to enable the go-to-market engine, whether that's through a sales team, channel partnerships, or a self-service motion. All that enablement content needs to be created, internalized, and used.

Maybe product management isn't so simple after all, but you already knew that.

What AI actually changed

Engineering teams are using coding agents like Cursor and Claude Code to ship faster than ever. The build has gotten dramatically faster, which means the decide side, the side that you, as a PM, own, is either the bottleneck already or about to be.

Most of you reading this probably knew that too.

The problem is that speed without clarity is just wasted effort. A coding agent that can ship in hours is incredible if it's building the right thing. If it's not, you've just generated tech debt at the speed of AI.

This is what we mean by specs for the agentic age. The bar on spec quality has moved from "clear enough for a human engineer" to "complete enough for an agent that won't ask follow-ups."

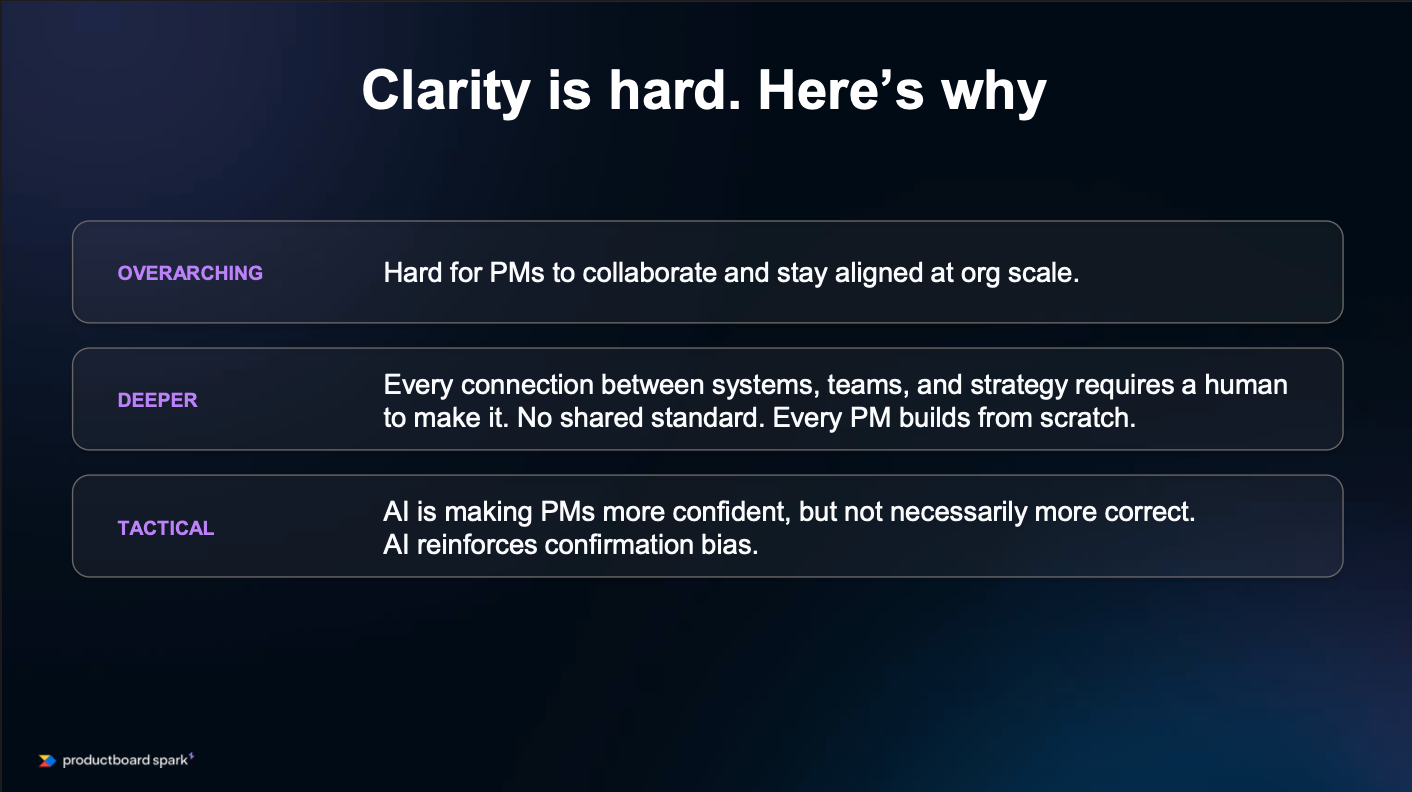

Why is driving clarity so hard?

At the highest level, it's hard for product managers to collaborate and stay aligned at an organizational scale. The bigger you get, the higher the tax for working alongside other humans, no matter how good your tools are. That's nothing new.

A layer deeper, every connection between systems, teams, and strategy has historically required a human to make it. There's no shared standard for how knowledge is connected inside an organization. Every PM is building from scratch. Knowledge is siloed, PMs are traversing six different tools to make a single decision, and when the person who's been there for five years leaves, all that knowledge tends to go with them.

This information collection overhead is the Context Tax that PMs are paying every single day just to get the right information to make a decision or execute on it.

At the individual level, AI is making PMs more confident but not necessarily more correct. AI accelerates confirmation bias.

How does a junior PM know they're drawing the wrong conclusion when the AI agent tells them they're “absolutely right” about everything they write? AI tells us we're right more often than it challenges us critically.

The scarcest resource in an AI-driven org

The scarcest resource in an AI-driven organization is not execution speed. We already have that. The scarcest resource is judgment. Knowing what to build, what to cut, and what right actually looks like for your customer and your market.

That judgment has always lived in the spec, or at least it should have. If the PM writing the requirements doesn't have full clarity on the what, the why, and the how, the spec is fundamentally broken. And I think we all know it has been for a while.

With all the information created over the years across all the systems that collect our data, it's been almost impossible for PMs to fully pay the Context Tax and reach full clarity. The system has always been broken. AI just made it more obvious, faster.

Prototyping a bad idea quickly with AI tells you it's bad faster. It doesn't help you build the right thing from the start. A spec written with a full understanding of market dynamics, customer needs, and technical considerations means that working with coding agents can deliver customer value nearly instantly.

That's why the spec is more important than ever.

At this point, it’s widely accepted that context is king in the world of AI. The spec is a living artifact of that context.

Most PMs are cutting corners when it comes to context. They paste a prompt into ChatGPT and ask it to draft a spec. You've probably worked with someone who cranks out content that's clearly AI-generated, and reading it is exhausting. That approach is a fancy autocomplete, not AI-powered product management.

PMs take this approach, then complain that AI is overhyped, when really it's underfed and underused. We haven't given it the right context. You have to build a sustainable context layer to maximize the power of AI.

What the context layer actually is

Before getting tactical, here's what the context layer needs to look like to generate high-quality output.

The context layer is a curated, connected ecosystem of organizational knowledge that agents draw from to do useful work. At its core, it generally breaks into five categories.

- Strategy and direction: Where are we going?

- Customer signal: What are people telling us?

- Team norms: What does good output look like in your organization?

- Competitive context: What's happening in the market?

- Institutional memory: What have we tried, what have we learned, and how do we avoid making similar mistakes in the future?

Most teams I talk to have at least some of these in decent shape. Usually, some customer feedback, some strategy docs, maybe a persona set. Others have it scattered, stale, or non-existent.
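One way to make those five categories concrete is to write them down as a simple manifest. What follows is a minimal sketch, not a prescribed schema: the class names, source names, paths, and dates are all hypothetical, and the only thing taken from the list above is the five category names.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ContextSource:
    """One document or feed an agent may read. All values are illustrative."""
    name: str
    location: str        # hypothetical path or page name
    last_reviewed: date


@dataclass
class ContextLayer:
    """The five categories, each holding the sources that back it."""
    strategy_and_direction: list[ContextSource] = field(default_factory=list)
    customer_signal: list[ContextSource] = field(default_factory=list)
    team_norms: list[ContextSource] = field(default_factory=list)
    competitive_context: list[ContextSource] = field(default_factory=list)
    institutional_memory: list[ContextSource] = field(default_factory=list)

    def empty_categories(self) -> list[str]:
        """Return the categories that have no sources yet."""
        return [name for name, sources in vars(self).items() if not sources]


# Made-up example: a team with feedback and strategy covered, nothing else.
layer = ContextLayer(
    strategy_and_direction=[
        ContextSource("Annual strategy doc", "wiki/strategy", date(2025, 1, 6)),
    ],
    customer_signal=[
        ContextSource("Support tickets export", "exports/tickets.csv", date(2025, 1, 15)),
    ],
)
print(layer.empty_categories())
```

Even a list this small makes it obvious which categories an agent would be guessing at.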

How can you expect a brand-new product manager to give you a rock-solid spec if they don't have access to this information? That's exactly what you're asking an LLM to do when you ask it to draft a spec without the right context, and then complain that the output is generic.

The context layer is a core part of the new intelligence system for product management.

Writing a product specification the old way vs. the new way

Here’s an example of how the process for writing a spec is evolving. I want to ship a new onboarding experience in my product. This is near and dear to me as a growth leader because it's what my team works on every day.

The old way

Historically, here's what I'd do:

First, I'd go through all the different feedback channels to understand pain points users experience getting up and running. What's confusing? Where's the friction? Then I'd look at funnel data to reconcile what users are saying with how they actually behave. Where are the drop-offs? Where are users getting stuck? How do I merge the qual and the quant?

Then I'd check our strategy docs and OKRs to make sure the segment I'm prioritizing is actually the one that matters to the business. Not all feedback is created equal. After that, I'd look through previous onboarding iterations and sign up for competitors' onboarding flows to get a sense of common paradigms and what market leaders are doing.

Then I'd write my spec. That's a couple of hours to define the problem statement, requirements, acceptance criteria, and success metrics.

I'd send it along. My engineer might or might not be working with coding agents. Then I'd revise, edit, recirculate, answer questions, do a technical delivery spike on feasibility, and change some requirements. If I'm being generous, that's a two-to-three-day process. Realistically, depending on the scope, longer.

Then, engineering starts building. Product questions and edge cases come up. I'm not sitting shoulder to shoulder with my engineers, so there are delays because we're working async. My calendar is booked, so they can't get a hold of me. The implementation timeline drags on.

Best case, the whole process takes at least seven hours just to get to a spec, assuming I know where to go for all that information. It could easily take a week or two.

On top of that, in a world with AI, some of those questions never even get asked. Engineers move faster, and AI makes assumptions when the spec is missing information. There's more rework on the tail end because AI got things wrong that an engineer would normally have caught and asked about.

The new way

Same feature, but this time with a context layer in place.

My first question to the agent: What are users saying about getting started with the product? The agent scours Slack and call transcripts and surfaces five confusing elements of getting started.

My second question: Which parts of our activation funnel seem to correlate with this feedback? The agent looks at Amplitude, merges the qualitative and quantitative insights, and finds lookalike users who aren't giving feedback but are hitting the same friction.

Third, I ask the agent to draft solution options based on the most impactful pain points, taking into account how other best-in-class companies have approached this. The agent can search the web, take screenshots, and use browser plugins to go through onboarding flows. It tailors the learnings to my organization because my strategy, approach, and past decisions all live in a centralized system.

Then I ask the agent to review our codebase. The last thing I want is to propose something only to have engineering shut it down because it'll take months to build. The agent comes back almost instantly with considerations, and I update the spec before I even talk to engineers.

I've done all this in about three and a half hours. The spec is robust, it's helped me think through edge cases I might have missed, and it drives more fruitful conversations with design and engineering. The implementation plan is crisp. AI doesn't have to guess as we push work to coding agents because the details are already there. And ultimately, the shipped experience is high quality from the start.

The Context Tax is gone. I spent my time thinking instead of hunting down information.

What changes when you build better specifications

In this world, product managers make better decisions, not just faster ones. Speed is the obvious win, but the bigger opportunity is decision quality.

There are fewer blind spots

Agents surface things you didn't think to search for. A PM writing a spec about onboarding might not think to check whether the support team flagged something last month that became a friction point. The agent does. The "oh, we're working on similar things" conversation that happens at larger organizations now happens in the work itself, not in a quarterly alignment meeting.

Trade-offs get better

Specs can include a review of the codebase to inform delivery estimates upfront. The quarterly jockeying for engineering resources can be better informed before decisions are made, not after.

There's less context drift

When someone leaves, knowledge doesn't walk out the door. It's accessible for humans and agents to come back to. Tribal knowledge gaps disappear.

Better context leads to better specs. Not because the agent is smarter than you, but because it has access to more relevant information and can surface it instantly.

3 principles for building your intelligence system

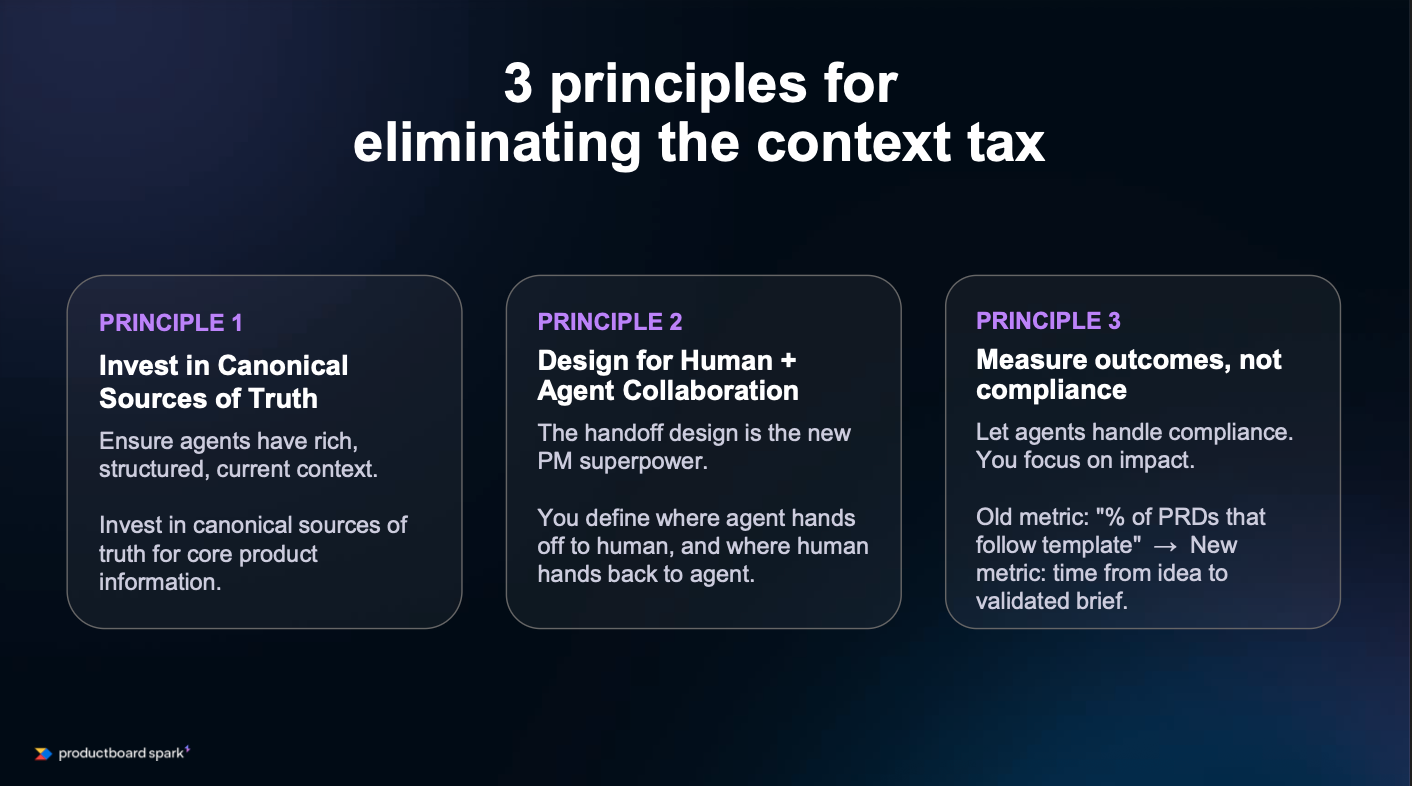

As you build an intelligence system that addresses the Context Tax, keep three principles in mind.

Invest in a collaborative, canonical source of truth

You need sources of truth for core product information: goals and OKRs, product strategy, personas, competitive landscape, and past decisions. Every PM needs these to make a decision. Changes to these assets need to propagate to the entire organization. A collaborative system for these sources of truth also reduces reliance on a single person to keep everything up to date.

Design for human and agent collaboration

Define where you need oversight. Which parts of the product development lifecycle do you want humans focused on, and which parts can an agent help automate?

Measure outcomes, not compliance

As you build agents, monitoring adherence to a process matters less. Plenty of you work alongside product ops people who want a specific template followed and track how many PMs are following the process.

That goes away when the whole thing is automated. Shift your measurement to velocity and impact. How quickly did you go from idea to validated spec? How much impact has a recent feature driven?

5 tactical steps to get started

I've seen too many teams get excited about AI and then get frustrated when it doesn't work. They throw prompts at it and hope it single-shots them into nirvana. The teams getting real results have invested in the underlying context layer.

Step 1: Start small

Too many teams try to boil the ocean. Pick the one part of the spec that's consistently the weakest, or where you're spending the most time paying the Context Tax.

Often, that's customer feedback data or making sure people understand the company strategy.

Step 2: Audit your gaps

This is where the real work starts. What inputs do you need to improve the selected part of the spec? Feedback? Persona info? Company strategy? Codebase? Technical considerations?

Figure out if you have it, if it's accessible, and if it's current. Capture it in a spreadsheet or a Notion doc, whatever works, and keep it lightweight.

Common gaps include feedback scattered across multiple systems, strategy docs that are out of date or non-existent, and persona docs living in some other wiki.

Don’t skip the audit. It's the most critical step, and you have to invest the time in tracking down or building out the right assets. Without it, AI will just autocomplete versions of ideas you already had.
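If a spreadsheet feels too loose, the same audit fits in a few lines of code. This is a rough sketch under my own assumptions: the `AuditRow` name, the status labels, and the example rows are invented for illustration. The three checks are the ones described above: do you have it, is it accessible, and is it current.

```python
from dataclasses import dataclass


@dataclass
class AuditRow:
    """One input the spec depends on. The example rows below are made up."""
    input_name: str
    exists: bool
    accessible: bool
    current: bool

    def gap(self) -> str:
        """Name the first failing check, or 'ok' if all three pass."""
        if not self.exists:
            return "missing"
        if not self.accessible:
            return "not accessible"
        if not self.current:
            return "stale"
        return "ok"


audit = [
    AuditRow("Customer feedback", exists=True, accessible=False, current=True),
    AuditRow("Company strategy", exists=True, accessible=True, current=False),
    AuditRow("Persona docs", exists=False, accessible=False, current=False),
    AuditRow("Codebase", exists=True, accessible=True, current=True),
]

# Keep only the inputs that fail at least one check.
gaps = {row.input_name: row.gap() for row in audit if row.gap() != "ok"}
for name, status in gaps.items():
    print(f"{name}: {status}")
```

The output is your to-do list: anything that isn't "ok" is context the agent will not have.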

Step 3: Choose the tool that fits your organization

You can self-manage this with a combination of tools: Claude Code, Notion or Airtable databases, Git repositories. They all provide value, and they all require maintenance.

Many aren't collaborative, which means context stays siloed with individuals. Still better than nothing, which is what most of us have today. Or you can use a purpose-built tool with agentic workflows, native integrations, persistent organizational memory, and team-level standardization.

Pick one, test it, see what works.

Step 4: Start building your first context layer

This isn't a data engineering project. You need integrations between the systems where your context lives and the layer where your agent works.

Customer feedback in different systems? Start with three sources. Use their APIs to push or pull into a Google Sheet and create a central repository. Purpose-built tools exist, but lightweight moves get you started.
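Here is roughly what that consolidation step looks like once the data is in hand. Treat it as a sketch with stated assumptions: the records are hard-coded stand-ins for whatever each tool's API or export returns, the field names are hypothetical rather than any vendor's real format, and the output is plain CSV text you could load into a sheet.

```python
import csv
import io

# Hypothetical raw records, shaped the way three different tools might export them.
zendesk_tickets = [{"id": 101, "subject": "Can't find the import button", "created_at": "2025-01-10"}]
slack_messages = [{"ts": "2025-01-11", "text": "Setup wizard skipped a step for us"}]
call_notes = [{"call_id": "c-7", "summary": "Confused by workspace roles", "date": "2025-01-12"}]

FIELDS = ["source", "date", "text"]


def normalize() -> list[dict]:
    """Map each source's field names onto one shared schema, oldest first."""
    rows = []
    rows += [{"source": "zendesk", "date": t["created_at"], "text": t["subject"]} for t in zendesk_tickets]
    rows += [{"source": "slack", "date": m["ts"], "text": m["text"]} for m in slack_messages]
    rows += [{"source": "calls", "date": c["date"], "text": c["summary"]} for c in call_notes]
    return sorted(rows, key=lambda r: r["date"])


def to_csv(rows: list[dict]) -> str:
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = normalize()
print(to_csv(rows))
```

The point of the shared schema is that the agent reads one consistent table instead of learning three formats.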

Make the information digestible for an agent. Clear headers. Clean sections. Remove unnecessary fluff so you're not spending tokens describing things that don't matter. Add readme files so an agent knows what files it's looking at.

Define what good looks like: a gold standard spec, a great customer feedback analysis. Document it so the agent has an example to work from as it does its own work.

Aim for good enough. Perfection slows you down.

Step 5: Measure the impact

Test it on a single use case, compare it against your baseline, and look at speed and quality. Speed is easy to see. If you can't spot speed improvements, something's wrong.

Quality is harder to spot. Pick a proxy metric and track it. Less rework after leadership review, or a survey on how confident PMs feel about what they're bringing to market.
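A baseline comparison can be this simple. In the sketch below, the hours reuse the seven-hour and three-and-a-half-hour figures from my onboarding example, while the review-round counts are invented placeholders for whatever quality proxy you pick. The `SpecRun` and `percent_change` names are mine, not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class SpecRun:
    """One spec, measured from idea to approval."""
    hours_to_spec: float
    review_rounds: int  # illustrative proxy for rework after leadership review


def percent_change(baseline: float, current: float) -> float:
    """Negative means the current value is lower than the baseline."""
    return (current - baseline) / baseline * 100


baseline = SpecRun(hours_to_spec=7.0, review_rounds=3)
with_context = SpecRun(hours_to_spec=3.5, review_rounds=2)

speed_delta = percent_change(baseline.hours_to_spec, with_context.hours_to_spec)
rework_delta = percent_change(baseline.review_rounds, with_context.review_rounds)

print(f"Time to spec: {speed_delta:+.0f}%")
print(f"Review rounds: {rework_delta:+.0f}%")
```

One run proves little, so track the same two numbers across several specs before drawing conclusions.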

At Productboard, we built a leaderboard that tracks every feature our PMs deliver. PMs sign up for targets upfront based on the impact a feature is going to drive and the metric it's going to move. We measure that impact weekly for three months.

Every six weeks, we get the whole product team together to review progress. Everyone feels the pressure to deliver value, we gamify it a little, and people compete to be at the top.

As we've built out our context layer, fewer features miss the mark. Fewer features fall short of their target because we're consolidating better information upfront and building things that actually matter.

The role isn't going anywhere

Building and maintaining a context layer is work. Systems integration, agent design, and ongoing maintenance. It's a real investment, and when the next model drops, you need to make sure everything still works. That's exactly why we built Productboard Spark - the only AI built for product managers.

Spark understands your product, your customers, and your market, and gives you the workflows, expertise, and use cases to go from insight to impact faster than ever. It's the home where product teams spend their time, instead of jumping between seven different systems a day.

Native integrations with Jira, Confluence, Zendesk, Gong, and the rest of the PM stack mean context is connected, not manually assembled. Persistent organizational memory means the longer your team uses Spark, the smarter it gets. Context drift goes away.

Team-level standardization solves the every-PM-builds-from-scratch problem, so it isn't just your best PM's AI setup, it's everyone's. And agentic workflows purpose-built for how PMs actually work - from customer insight to brief, from brief to coding-agent-ready spec - close the gap between generic LLM output and decision-ready specs.

One senior PM recently told us he built a leadership-approved, engineering-ready spec in 90 minutes, work that would normally have taken him a week and a half.

If you take away one thing from this, make it this homework: pick your biggest context gap, audit the context layer, and use it in your next spec. Do those three things in the next two weeks, and you'll see exactly where to invest and what you can expect as you work toward a fully functioning context layer.

There's a lot of debate about whether the product manager role is going to disappear. I don't think so. Anything involving judgment, trade-offs, or values stays human.

PMs become curators of taste - the people who decide what "right" actually looks like for their customer and their market, while agents handle the synthesis, the documentation, and the cross-system context-gathering that used to eat the day.

Product management and product specifications aren't dead. AI is just raising the bar on what's expected of both.

Productboard Spark is currently in beta. Check it out.

Follow us on LinkedIn
